100 Reasons Your Kubernetes Cluster is Crying: Part 5: The Networking Void 🌐

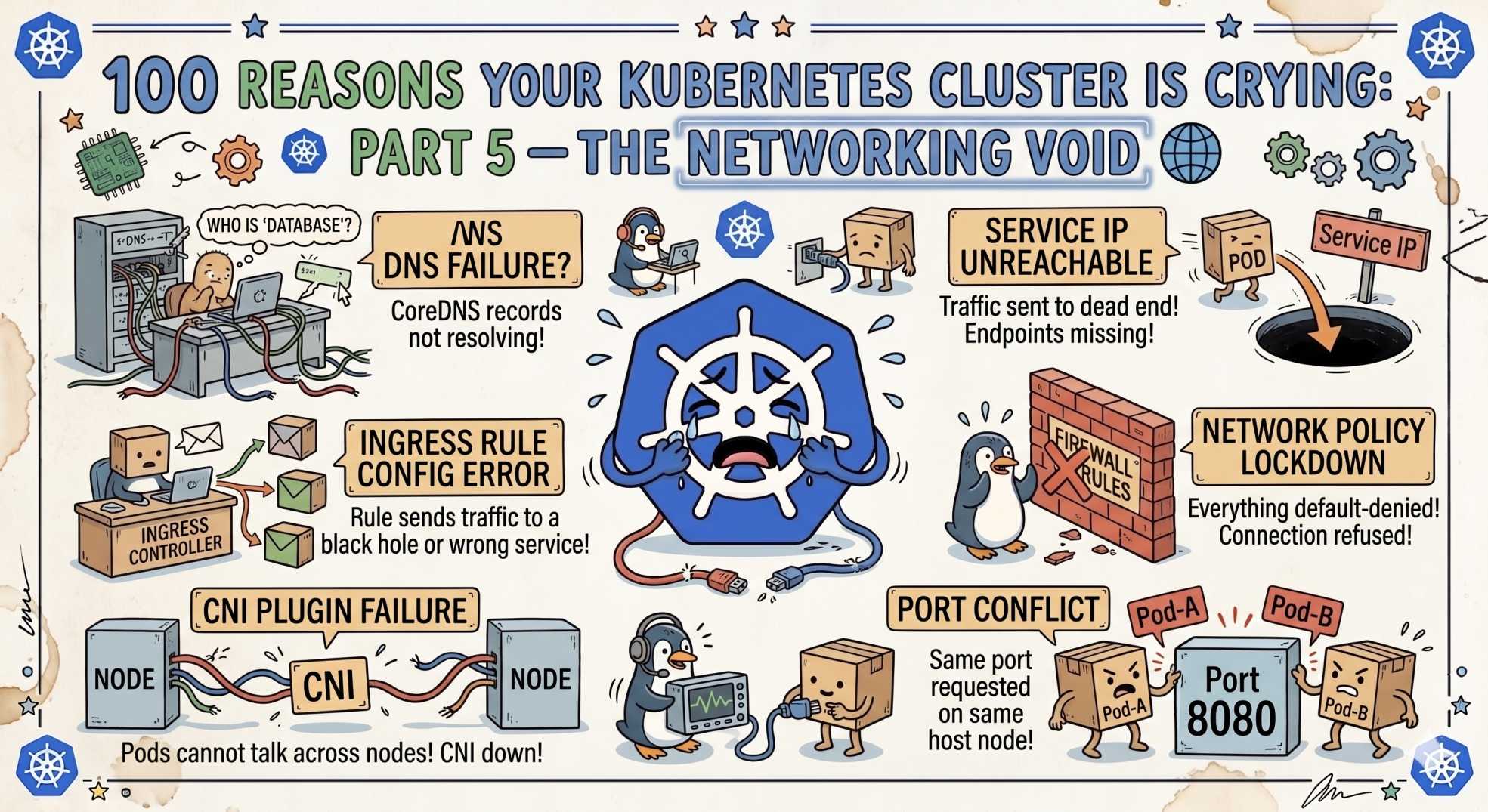

Pods are running, but no traffic flows? Part 5 reveals 10 Kubernetes networking issues, from Service not reachable to DNS failures and CNI breakdowns.

The team at DevOps Inside knows that even if your pod has a home and its "furniture" (storage) is perfectly placed, it’s still useless if nobody can talk to it.

In Part 4, we tackled the "Storage Struggle", those stateful deadlocks that keep your data out of reach.

But now your pod is up, it’s bound to a volume, and yet... nothing. No traffic. No pings. Just a "Connection Refused" staring you in the face.

Following our From Pipelines to Prompts series, we’re moving from the disk to the wire. If your packets are dropping into a black hole, your cluster is just an expensive heater.

The Networking Void

In the SRE world, debugging networking is the ultimate test of patience. It’s the realm of ghost packets and silent drops. Let’s break down the next 10 reasons your cluster is crying over its connectivity.

41. Service Not Reachable: The Unrequited Love 💔

Your Service exists, your Pod exists, but they aren’t talking.

The SRE Reality:

Almost always a selector mismatch. Your Service is looking for app: web-prod, but your Pod says app: web-production.

The Fix:

Run:

kubectl get endpoints <service-name>

If it shows <none>, your Service is pointing to nothing. Fix your labels.
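To make the selector rule concrete, here is a minimal sketch of a Service and a Pod whose labels line up. The names (web, web-0), the image, and the port numbers are hypothetical placeholders; only the matching app label reflects the scenario above.

```yaml
# Sketch only: names, image, and ports are illustrative assumptions.
apiVersion: v1
kind: Service
metadata:
  name: web
spec:
  selector:
    app: web-prod          # must equal the Pod label below, character for character
  ports:
    - port: 80
      targetPort: 8080
---
apiVersion: v1
kind: Pod
metadata:
  name: web-0
  labels:
    app: web-prod          # "web-production" here would leave the Service with no endpoints
spec:
  containers:
    - name: web
      image: registry.example.com/web:1.0   # placeholder image
      ports:
        - containerPort: 8080
```

A selector matches only when every key and value in it appears on the Pod, so a one-word difference is enough to empty the endpoints list.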

42. DNS Resolution Failure: “It’s Always DNS” 🧠

Your app tries to reach auth-service and fails.

The SRE Reality:

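CoreDNS is overloaded, crash-looping, or unreachable from your Pod.

The Fix:

Check the CoreDNS pods in kube-system. If they’re crash-looping, your cluster just lost its phonebook. If they look healthy, run a lookup from inside a Pod to confirm the name resolves from where your app actually runs.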

43. LoadBalancer Pending: The Cloud Waiting Room ⏳

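Your Service of type LoadBalancer shows <pending> under EXTERNAL-IP and never gets an address.

The SRE Reality:

A cloud quota issue, a misconfigured subnet, or no load balancer controller running at all (common on bare metal).

The Fix:

Check the Service events with kubectl describe svc, then the cloud controller logs. It’s usually permissions or quota limits.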

44. Connection Refused: The Introvert App 🚫

The network path exists, but the app says no.

The SRE Reality:

Either nothing is listening on the target port, or your app is listening only on 127.0.0.1.

The Fix:

Bind to 0.0.0.0. Otherwise, nothing outside the Pod can reach it.

45. Connection Timeout: The Invisible Wall 🧱

Requests just hang.

The SRE Reality:

Blocked by NetworkPolicy or Security Group.

The Fix:

Audit ingress and egress rules. Once any NetworkPolicy selects a Pod, traffic in that direction is denied by default unless a rule explicitly allows it.
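As an illustration of an explicit allow rule, the sketch below lets Pods labeled app: frontend reach Pods labeled app: web-prod on TCP 8080. The policy name, namespace, labels, and port are all hypothetical; adapt them to whatever your audit turns up.

```yaml
# Sketch only: name, namespace, labels, and port are illustrative assumptions.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-frontend-to-web
  namespace: prod
spec:
  podSelector:
    matchLabels:
      app: web-prod        # the Pods this policy protects
  policyTypes:
    - Ingress
  ingress:
    - from:
        - podSelector:
            matchLabels:
              app: frontend   # only these Pods (same namespace) may connect
      ports:
        - protocol: TCP
          port: 8080          # the Pod's port, not the Service port
```

Because this policy selects the web-prod Pods for ingress, any other inbound traffic to them is dropped, which is exactly the kind of silent timeout described above.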

46. CNI Plugin Missing: The Engine with No Wheels 🛞

Pods are stuck in ContainerCreating or have no IP.

The SRE Reality:

CNI plugin (Calico, Cilium, Flannel) is down.

The Fix:

Check CNI daemonset logs. No CNI = no networking.

47. Kube-Proxy Failure: The Traffic Cop Quit 🚦

Service works on some nodes, not others.

The SRE Reality:

kube-proxy on the affected nodes failed to update its iptables or IPVS rules.

The Fix:

Check the kube-proxy logs on those nodes, then restart it. Consider switching to IPVS if scaling issues appear.

48. Port Mismatch: The Wrong Number ☎️

You call port 80, app listens on 8080.

The SRE Reality:

The Service’s targetPort doesn’t match the port the container actually listens on.

The Fix:

Align targetPort with container port.
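One way to keep the two in sync is to name the container port and reference that name from the Service. The sketch below assumes a hypothetical web app listening on 8080; the resource names and image are placeholders.

```yaml
# Sketch only: names, image, and port numbers are illustrative assumptions.
apiVersion: v1
kind: Service
metadata:
  name: web
spec:
  selector:
    app: web-prod
  ports:
    - name: http
      port: 80             # what clients call
      targetPort: http     # resolves to the container port named "http"
---
apiVersion: v1
kind: Pod
metadata:
  name: web-0
  labels:
    app: web-prod
spec:
  containers:
    - name: web
      image: registry.example.com/web:1.0   # placeholder image
      ports:
        - name: http
          containerPort: 8080   # must be the port the process really binds to
```

Note that containerPort is descriptive: declaring it does not make the app listen there, so the number still has to match the application's own configuration.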

49. Headless Service Issues: The Identity Crisis 🧩

Your load balancing "breaks".

The SRE Reality:

Headless Services (clusterIP: None) don’t load balance. DNS returns the pod IPs directly, and the client picks one.

The Fix:

Use only for stateful apps where direct pod addressing is required.
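For reference, a headless Service is an ordinary Service with clusterIP set to None. The sketch below uses a hypothetical database workload; the name, label, and port are assumptions.

```yaml
# Sketch only: name, label, and port are illustrative assumptions.
apiVersion: v1
kind: Service
metadata:
  name: db
spec:
  clusterIP: None          # this is what makes the Service headless
  selector:
    app: db
  ports:
    - port: 5432
```

Paired with a StatefulSet whose serviceName is db, each Pod gets a stable DNS name of the form db-0.db.<namespace>.svc.cluster.local (assuming the default cluster domain), which is the direct addressing stateful apps need.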

50. Invalid Ingress Class: The Lost Letter 📭

Ingress is ignored.

The SRE Reality:

Missing or incorrect ingressClassName.

The Fix:

Use:

spec.ingressClassName

Avoid the outdated kubernetes.io/ingress.class annotation.
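A minimal sketch of where the field sits in an Ingress manifest follows. The class name nginx, the host, and the backend Service are hypothetical; the class must match an IngressClass that exists in your cluster (kubectl get ingressclass lists them).

```yaml
# Sketch only: class name, host, and backend are illustrative assumptions.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: web
spec:
  ingressClassName: nginx  # must match an existing IngressClass
  rules:
    - host: web.example.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: web
                port:
                  number: 80
```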

🤖 The AI Edge: Self-Healing Networking

In 2026, we’re done manually tracing packets.

AI agents now plug directly into eBPF-powered systems like Cilium. Instead of running tcpdump, they:

- Monitor flow logs in real time

- Detect dropped packets instantly

- Identify broken dependencies

- Suggest policy fixes or auto-generate PRs

Some systems even quarantine noisy services that flood the network with retries.

This turns networking from a black box into a live, observable system.

🧪 Interactive SRE Challenge

Run:

kubectl get svc

Pick a service, then:

kubectl describe svc <name>

Check Endpoints.

If it’s empty or shows <none>, you’ve just found Silent Killer #41.

The Verdict

Kubernetes networking is a layered system:

- Wire → CNI

- Identity → Service

- Address → DNS

Miss one layer, and you spend hours chasing ghosts.

What’s Next

Stay tuned for Part 6, where we move from the wire to the vault.

We’ll break down RBAC and security issues that turn your cluster into a wall of “Permission Denied” errors 🔐

💬 Quick Question: What’s the hardest networking bug you’ve ever fixed?

Did an iptables loop ever take down your cluster?

Let’s swap horror stories in the comments.

“In Kubernetes, silence is never peace. If packets aren’t flowing, something is already broken.”