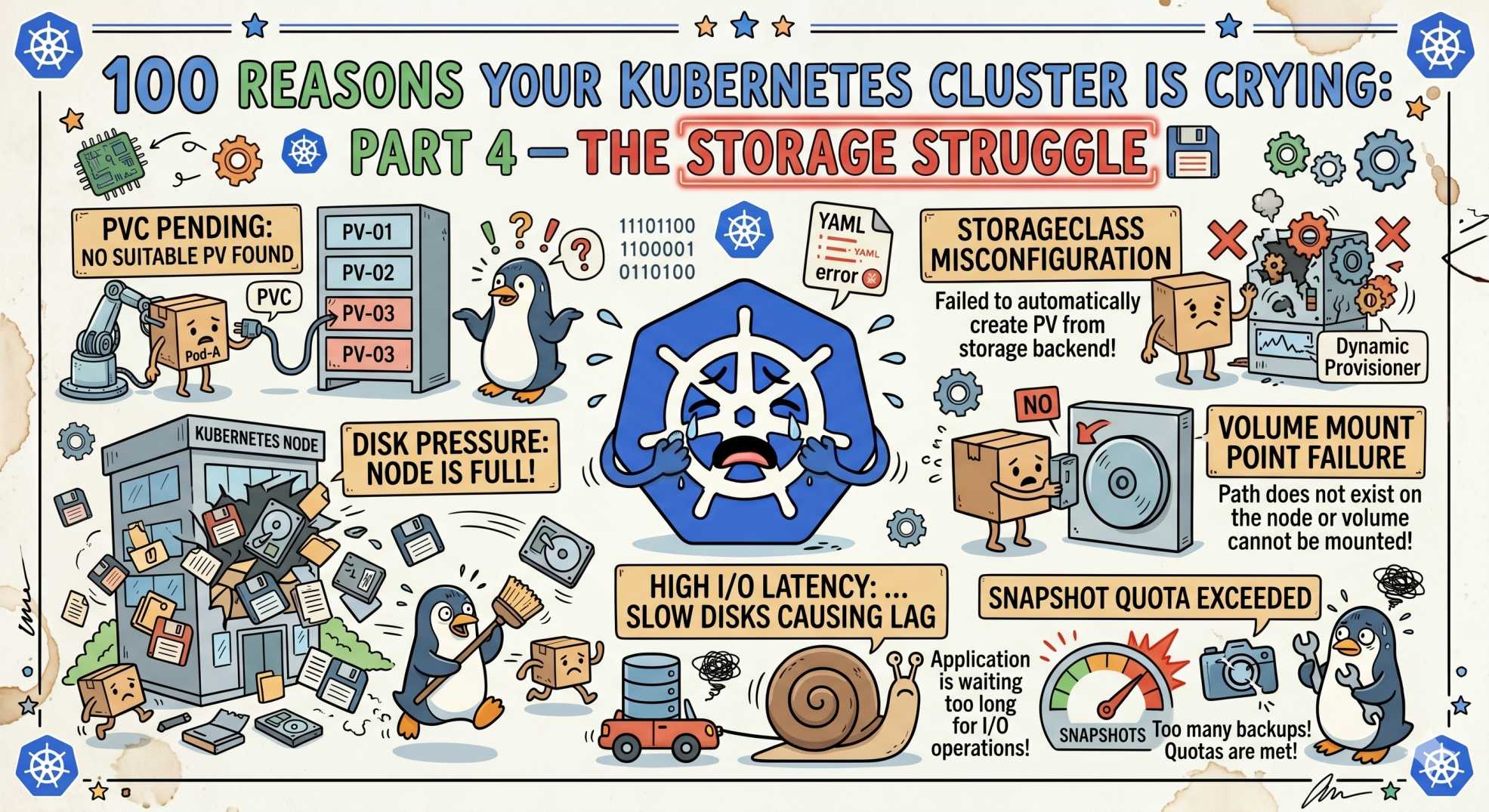

100 Reasons Your Kubernetes Cluster is Crying: Part 4 - The Storage Struggle 💾

Pods stuck in ContainerCreating? Part 4 of this Kubernetes series uncovers 10 storage issues like PVC Pending, mount errors, and volume conflicts.

The team at DevOps Inside knows that once the Scheduler finally finds a home for your pod, the real drama begins.

In Part 3, we looked at the No Vacancy sign, those scheduling headaches that keep your pods in a Pending state because the nodes are full.

But what happens when the Scheduler finds a node, and the pod is still stuck because it can’t find its “furniture”?

Following our From Pipelines to Prompts series, we’re moving from the rack to the disk. If your storage layer is broken, your stateful apps are just empty shells.

In the world of SRE, a ContainerCreating status that lasts more than 60 seconds often points to a storage conflict, once you’ve ruled out slow image pulls and missing ConfigMaps or Secrets.

Your Pod and its PersistentVolume just aren’t getting along.

Let’s dive into the next 10 reasons your cluster is crying over spilled data.

31. PVC Pending: The Mismatched Warehouse 📦

Your PersistentVolumeClaim (PVC) is stuck in Pending, and your pod is going nowhere.

The SRE Reality: The claim names a StorageClass that doesn’t exist, or it asks for more capacity than the backend can provision.

The Fix:

kubectl get storageclass

Check what’s actually available before requesting storage.
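
As a minimal sketch, a claim that names its class explicitly might look like the manifest below. The claim name `app-data`, the class `standard`, and the 10Gi size are placeholders rather than values from this series; swap in a class that `kubectl get storageclass` actually lists in your cluster.

```yaml
# Hypothetical PVC: names and sizes are illustrative only.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: app-data
spec:
  # Must match a class listed by `kubectl get storageclass`.
  # Omit the field to fall back to the cluster's default class, if one is set.
  storageClassName: standard
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 10Gi   # keep this within what the backend can provision
```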

32. VolumeMount Mismatch: The Missing Door 🚪

Your pod starts, then crashes with “Directory not found.”

The SRE Reality: The volume is defined, but it is never mounted into the container, or it is mounted at the wrong path.

The Fix:

Double-check mountPath. Kubernetes does exactly what you write, nothing more.
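
To make the two halves of that wiring visible, here is a small sketch of a pod that mounts a claim. The pod name, image, path `/var/lib/app`, and claim `app-data` are all assumptions for illustration. The key point is that the `name` under `volumeMounts` must match a `name` under `volumes`, and `mountPath` must be the directory the app really uses.

```yaml
# Hypothetical pod: image, names, and paths are placeholders.
apiVersion: v1
kind: Pod
metadata:
  name: app
spec:
  containers:
    - name: app
      image: busybox:1.36
      # Lists the mount point on startup so a wrong path is obvious in the logs.
      command: ["sh", "-c", "ls -ld /var/lib/app && sleep 3600"]
      volumeMounts:
        - name: data                # must equal a name under spec.volumes
          mountPath: /var/lib/app   # the directory the application reads and writes
  volumes:
    - name: data
      persistentVolumeClaim:
        claimName: app-data         # an existing PVC in the same namespace
```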

33. Multi-Attach Error: The RWO Tug-of-War ⚔️

Volume already attached to another node.

The SRE Reality: ReadWriteOnce volumes can only attach to one node at a time.

The Fix:

Wait for the old pod’s volume to detach, or switch to RWX-capable storage such as EFS or CephFS.
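
One common trigger is a rolling update: the new pod lands on a different node while the old pod still holds the volume. The sketch below assumes a single-replica Deployment (names and image are hypothetical) and uses the `Recreate` strategy so the old pod is terminated before its replacement is created. It is one way to build the "wait for detach" step into the rollout, at the cost of a short outage.

```yaml
# Hypothetical single-replica Deployment backed by an RWO claim.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: app
spec:
  replicas: 1
  strategy:
    type: Recreate   # stop the old pod first, so the volume can detach before reattaching
  selector:
    matchLabels:
      app: app
  template:
    metadata:
      labels:
        app: app
    spec:
      containers:
        - name: app
          image: busybox:1.36
          command: ["sleep", "3600"]
          volumeMounts:
            - name: data
              mountPath: /var/lib/app
      volumes:
        - name: data
          persistentVolumeClaim:
            claimName: app-data   # assumed to be a ReadWriteOnce claim
```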

34. AccessMode Mismatch: The Contract Violation 📜

You want shared storage, but the backend doesn’t support it.

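The SRE Reality: Not all storage types support RWX. Block-backed volumes such as EBS are typically RWO only, while RWX generally needs a file-based backend such as NFS, EFS, or CephFS.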

The Fix:

Use the correct storage backend for your access mode.
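
For illustration, a shared claim could be sketched like this. The class name `shared-fs` is hypothetical and is assumed to be backed by a file-based provisioner that supports ReadWriteMany; if the backend does not, the claim will simply stay Pending.

```yaml
# Hypothetical RWX claim: `shared-fs` stands in for a file-based StorageClass.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: shared-data
spec:
  storageClassName: shared-fs
  accessModes:
    - ReadWriteMany   # pods on several nodes may mount read-write at the same time
  resources:
    requests:
      storage: 20Gi
```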

35. Stale NFS Mounts: The Digital Ghost 👻

Pod is running, but writes hang forever.

The SRE Reality: The NFS server is gone, and the client is stuck waiting for it to answer.

The Fix:

Force unmount or restart the node.

36. FSType Mismatch: The Formatting Fight ⚙️

Volume fails to mount due to filesystem mismatch.

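The SRE Reality: The node is missing the tools for the requested filesystem (for example, the xfs utilities), or the disk is already formatted with a different one.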

The Fix:

Ensure filesystem type matches your configuration.
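
With CSI drivers, the filesystem is often chosen in the StorageClass. The sketch below uses the `csi.storage.k8s.io/fstype` parameter, which the CSI external-provisioner recognizes; the class name and the provisioner `example.csi.vendor.com` are made up, and whether `ext4` or `xfs` is accepted depends on your driver and node image.

```yaml
# Hypothetical StorageClass: the provisioner name is a placeholder.
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: fast-ext4
provisioner: example.csi.vendor.com
parameters:
  # Filesystem the volume is formatted with on first mount.
  # The node must have matching tooling installed (e.g. e2fsprogs for ext4).
  csi.storage.k8s.io/fstype: ext4
```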

37. PV/PVC Binding Issues: The Invisible Wall 🧱

PVC can’t bind to PV.

The SRE Reality: The PV is already bound to another claim, or it is stuck in Released with a stale claimRef from a deleted claim.

The Fix:

Check ReclaimPolicy and PV status.
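
To show what has to line up for a bind, here is a sketch of a statically provisioned PV and a claim that can match it. Everything here is illustrative: the names, the `manual` class, and the `hostPath` backend (suitable only for single-node demos). The claim binds only if the class and access mode match and the PV is at least as large as the request.

```yaml
# Hypothetical static PV and a claim sized to fit it.
apiVersion: v1
kind: PersistentVolume
metadata:
  name: demo-pv
spec:
  capacity:
    storage: 10Gi
  accessModes:
    - ReadWriteOnce
  # Retain keeps the data after the claim is deleted, but the PV then sits in
  # Released and will not rebind until an admin clears spec.claimRef or recreates it.
  persistentVolumeReclaimPolicy: Retain
  storageClassName: manual
  hostPath:
    path: /mnt/demo-data   # demo-only backend
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: demo-claim
spec:
  storageClassName: manual   # must equal the PV's storageClassName
  accessModes:
    - ReadWriteOnce          # must be offered by the PV
  resources:
    requests:
      storage: 5Gi           # must not exceed the PV's capacity
```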

38. Volume Not Found: The Deleted Disk ❌

Kubernetes thinks volume exists, cloud provider disagrees.

The SRE Reality: Disk was deleted manually outside Kubernetes.

The Fix:

Recreate and restore from a snapshot. Avoid manual cloud edits.
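
If your CSI driver supports snapshots, the restore can be expressed as a new claim that uses a snapshot as its data source. This sketch assumes the snapshot CRDs and controller are installed and that a VolumeSnapshot named `app-data-snap` already exists in the same namespace; both names and the class are hypothetical.

```yaml
# Hypothetical restore: creates a fresh volume pre-populated from a snapshot.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: app-data-restored
spec:
  storageClassName: standard   # placeholder; use a class served by the snapshot's driver
  dataSource:
    name: app-data-snap        # assumed existing VolumeSnapshot
    kind: VolumeSnapshot
    apiGroup: snapshot.storage.k8s.io
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 10Gi            # must be at least the size of the snapshot
```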

39. Permission Denied (UID/GID): The Lockout 🔐

Volume is mounted, but the app cannot write.

The SRE Reality: The container runs as a UID/GID that doesn’t own the files on the volume.

The Fix:

Set fsGroup in the pod’s securityContext so the mounted volume is owned by a group the container belongs to.
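
A minimal sketch of that setting follows. The numeric IDs, image, and names are arbitrary examples, and not every backend honors `fsGroup` (some NFS setups, for instance, manage ownership on the server side), so treat this as a starting point.

```yaml
# Hypothetical pod: IDs and names are examples only.
apiVersion: v1
kind: Pod
metadata:
  name: app
spec:
  securityContext:
    runAsUser: 1000    # UID the container processes run as
    runAsGroup: 3000   # primary GID of those processes
    # For volume types that support it, Kubernetes sets the volume's group
    # ownership to this GID on mount and adds it to the container's
    # supplementary groups, so the process can write to the volume.
    fsGroup: 2000
  containers:
    - name: app
      image: busybox:1.36
      command: ["sh", "-c", "id && touch /data/write-test && sleep 3600"]
      volumeMounts:
        - name: data
          mountPath: /data
  volumes:
    - name: data
      persistentVolumeClaim:
        claimName: app-data
```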

40. MountVolume.SetUp Failed: The Missing Driver 🚧

Pod is stuck with CSI driver errors.

The SRE Reality: The CSI driver isn’t installed on the node, or it’s outdated.

The Fix:

Ensure the CSI controller and node pods are running. Many drivers live in kube-system, but some install into their own namespace.

🤖 The AI Edge: Predicting Disk Death

In 2026, storage is becoming predictive.

AI agents analyze growth patterns of PVCs and detect anomalies early. Instead of reacting to a full disk, they trigger volume expansion before the outage.

This shifts storage from reactive firefighting to proactive scaling.
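
Whatever does the predicting, the expansion itself is an ordinary Kubernetes operation: raise the claim's requested size on a class that allows it. The sketch below assumes a hypothetical class `expandable` and provisioner `example.csi.vendor.com`; an agent (or a human) would apply the PVC with the larger `storage` value.

```yaml
# Hypothetical expandable class plus a claim after its size has been raised.
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: expandable
provisioner: example.csi.vendor.com   # placeholder driver name
allowVolumeExpansion: true            # without this, resize requests are rejected
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: app-data
spec:
  storageClassName: expandable
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 20Gi   # raised from an earlier 10Gi; shrinking is not supported
```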

⚡ Interactive SRE Challenge

Run this:

kubectl get pvc -A

Do you see any PVCs in the Pending or Lost state?

Check events. Is it capacity, StorageClass, or something deeper?

The Verdict

Storage is the heaviest layer in Kubernetes.

Once you attach a volume, your pod is no longer fully disposable. It becomes stateful and fragile.

Treat storage as a first-class citizen, or it will break your cluster when you least expect it.

🔥 What’s Next

Stay tuned for Part 5: The 'Network Nightmare', where we move from disk to wire and debug why your traffic disappears into a black hole 🌐

💬 Quick Question: What’s the most frustrating storage issue you’ve ever faced? Did a multi-attach deadlock ever keep you up all night?

Let’s swap horror stories in the comments.

“Stateless apps fail fast. Stateful apps fail forever.”