100 Reasons Your Kubernetes Cluster is Crying: Part 3- The No Vacancy Sign 🚫

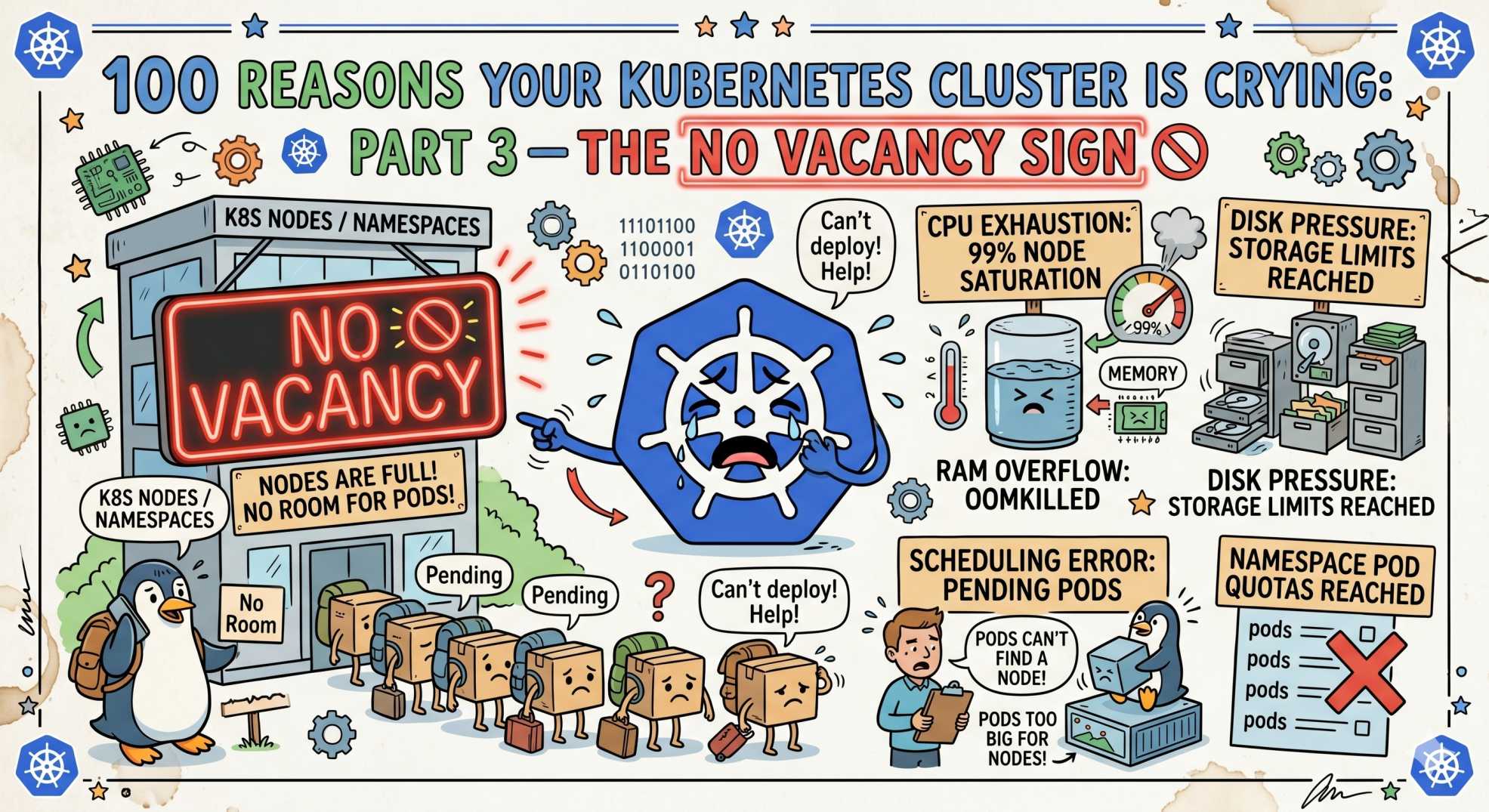

Pods stuck in Pending? Part 3 of this Kubernetes series reveals 10 scheduling issues like NodeNotReady, resource limits, and taints blocking workloads.

The team at DevOps Inside knows that even if you have the perfect container image and a flawless registry, your pod still needs a home.

In Part 2, we tackled the Registry Redline, those annoying pull errors that keep your code stuck in the cloud.

But once the image is pulled, the Kubernetes Scheduler has to find a node with enough room at the inn.

Following our From Pipelines to Prompts series, we’re moving from the source of truth to the reality of the rack. If your nodes are stressed, your pods are going nowhere.

In the SRE world, a pod in a Pending state is the ultimate cliffhanger.

It’s the “I’m ready to work, but I have nowhere to sit” energy.

Let’s dive into the next 10 reasons your cluster is secretly sobbing because its nodes are under pressure.

21. PodUnschedulable: The Mathematical Wall 🧮

Your pod is stuck in Pending because the Scheduler can’t find a node that satisfies all requirements.

The SRE Reality: You’ve requested more resources than any node can provide.

The Fix:

kubectl describe pod <pod-name>

Look for FailedScheduling. Messages like “Insufficient CPU” or “Insufficient memory” tell you exactly what’s wrong.

22. NodeNotReady: The Ghost in the Machine 👻

The node exists, but it has stopped reporting its heartbeat.

The SRE Reality: Kubelet crash or underlying VM failure.

The Fix:

Check the instance health. If SSH fails, the node is likely gone or reclaimed.

23. Taint/Toleration Mismatch: The VIP Section 🎟️

Your pod is trying to land on a restricted node.

The SRE Reality: Node is reserved for special workloads like GPU jobs.

The Fix:

Match tolerations with node taints. Without it, your pod is blocked.

24. Affinity Conflicts: The “I Hate My Neighbor” Rule 🚷

Your rules are too strict, leaving no valid node.

The SRE Reality: You ran out of eligible nodes due to hard anti-affinity.

The Fix:

Use preferredDuringSchedulingIgnoredDuringExecution instead of strict rules.

25. ResourceQuota Exceeded: The Budget Ceiling 💸

Cluster has space, but your namespace doesn’t.

The SRE Reality: You hit a quota limit set by the platform team.

The Fix:

Clean unused resources or request a quota increase.

26. DiskPressure: The Log Hoarder 📦

Node disk is almost full.

The SRE Reality: Logs or image cache grew uncontrollably.

The Fix:

Rotate logs and clean unused images. Kubernetes avoids scheduling on such nodes.

27. MemoryPressure: The Silent Choke 🧠

Node is running out of RAM.

The SRE Reality: Too many burstable pods without limits.

The Fix:

Always define memory limits to prevent starvation.

28. PIDPressure: The Process Leak 🔁

Node cannot create new processes.

The SRE Reality: Fork bombs or excessive threads hit system limits.

The Fix:

Configure pod-max-pids to protect the node.

29. Node OutOfDisk: The Hard Stop 🛑

The disk is full.

The SRE Reality: Root filesystem is clogged.

The Fix:

Free space manually or expand storage.

30. Insufficient CPUs: The Core Crunch ⚙️

Your pod requests more CPU than is available.

The SRE Reality: Scheduler cannot guarantee resources.

The Fix:

Audit CPU requests. Most services are over-provisioned.

🤖 The AI Edge: Autonomous Bin-Packing

In 2026, manual resource guessing is fading.

AI agents now analyze historical usage and automatically adjust resource requests. This improves scheduling efficiency and reduces cloud costs.

Some teams are saving up to 40% on infrastructure bills by using AI-driven right-sizing.

⚡ Interactive SRE Challenge

Run this:

kubectl get nodes

If any node is not Ready, run:

kubectl describe node <node-name>

Check the Conditions section. Is it Pressure or something deeper?

The Verdict

Scheduling is a giant game of Tetris.

If you don’t define proper Requests and Limits, the Scheduler will eventually run out of moves.

🔥 What’s Next

Stay tuned for Part 4: The 'Storage Struggle', where we move from nodes to volumes and break down why your PVCs stay stuck in Pending.

💬 Quick Question: What’s the weirdest reason a node of yours ever went NotReady?

Let’s swap stories in the comments.

And seriously… why is it always the node with the most DiskPressure running your most critical database? 😭

“A pod without a node is just a promise. And promises don’t handle production traffic.”