The Silent Bill-Killers: 5 Kubernetes Secrets That Are Draining Your Budget

Is your Kubernetes cluster secretly wasting money? Discover 5 silent issues like CPU throttling, DNS errors, and over-provisioning hurting performance and cost.

At DevOps Inside, we’ve spent years digging through the debris of “healthy” clusters that were quietly burning money like a bonfire. You know the ones. Dashboards are green, pods are running, and yet the cloud bill looks like a phone number from another country.

Let’s do a quick reality check.

✋ Raise your hand if you’ve ever looked at a perfectly green Grafana dashboard and thought, “Something is definitely wrong here.”

Exactly.

Welcome to the Silent Killers edition of your cluster audit. Let’s find out whether your infrastructure is actually stable or just very good at hiding its problems.

01. The DNS “Deep Freeze” ❄️

The Scenario:

You deploy a new microservice. Everything looks fine. But the logs are silent. No errors. No stack traces. Just nothing.

The Secret:

One small typo in your FQDN.

For example, payment-gw.internal.prod instead of payment-gateway.internal.prod.

Interactive Check:

Run a pod listing, for example kubectl get pods --all-namespaces, and watch the STATUS column.

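If pods sit in an init state (shown as “Init:0/1” or “PodInitializing”) or in “ContainerCreating” with no logs for minutes, you are probably not dealing with a code issue. Something in the startup path is stuck in a DNS resolution loop, retrying a name that will never resolve.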

The Cost:

Your app is consuming CPU and memory while doing absolutely nothing except waiting for a response that will never arrive.
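One way to stop paying for a silent wait is to resolve every dependency at startup and fail loudly when a name does not exist. The sketch below is a minimal illustration, not a drop-in health check: the function name unresolved_hosts and the injectable resolve parameter are assumptions made for this example, and the hostnames are the ones from the typo scenario above.

```python
import socket
from typing import Callable, Iterable, List


def unresolved_hosts(
    hosts: Iterable[str],
    resolve: Callable = socket.getaddrinfo,
) -> List[str]:
    """Return the hostnames that fail DNS resolution.

    `resolve` defaults to socket.getaddrinfo; it is injectable so the
    check can be exercised without touching a real DNS server.
    """
    failed = []
    for host in hosts:
        try:
            # Port is None because only name resolution matters here.
            resolve(host, None)
        except socket.gaierror:
            failed.append(host)
    return failed
```

Called at process start with the list of configured upstreams, a non-empty result is a reason to log the bad names and exit with a non-zero code, so the typo shows up as a crash loop with a clear message instead of a quiet pod.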

02. The Ghost of CPU Throttling 👻

This is where things get frustrating.

Your metrics show 40 percent CPU usage. Looks safe, right?

The Secret:

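You are likely being throttled at the kernel level by the CFS bandwidth controller, which is how Linux enforces CPU limits. Averaged utilization metrics hide short bursts of throttling: a container can burn through its quota early in a scheduling period and sit paused for the rest of it, while the average still reads 40 percent.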

Interactive Check:

Ask yourself:

- Are you tracking container_cpu_cfs_throttled_periods_total?

- Have you checked cpu.stat inside the container?

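If not, your application might be getting paused in a large share of its scheduling periods (100 ms each by default, so up to ten pauses per second) without the average ever showing it.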
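To put a number on it, the share of throttled periods can be computed from the contents of cpu.stat. The sketch below assumes the file exposes nr_periods and nr_throttled counters, which both cgroup v1 and v2 do when a CPU limit is set; the function name throttle_ratio and the sample values are made up for illustration.

```python
def throttle_ratio(cpu_stat_text: str) -> float:
    """Return the fraction of CFS periods in which the cgroup was throttled.

    Expects the raw text of cpu.stat, one "name value" pair per line.
    Returns 0.0 when no periods have been recorded (e.g. no CPU limit).
    """
    stats = {}
    for line in cpu_stat_text.splitlines():
        parts = line.split()
        if len(parts) == 2:
            stats[parts[0]] = int(parts[1])

    periods = stats.get("nr_periods", 0)
    if periods == 0:
        return 0.0
    return stats.get("nr_throttled", 0) / periods


# Hypothetical sample: throttled in 250 of 1000 periods.
sample = "nr_periods 1000\nnr_throttled 250\nthrottled_usec 4200000\n"
ratio = throttle_ratio(sample)
```

Because the counters are cumulative, a single reading describes the container's whole lifetime. Taking two readings a minute apart and comparing the differences gives a better picture of what is happening right now.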

The Cost:

Higher latency, slower responses, and wasted compute that looks “healthy” on dashboards but performs poorly in reality.

03. The Taint of “Silent Eviction” ⚠️

The Scenario:

A pod restarts every 30 to 60 minutes.

Liveness probes are fine. No OOMKills. Everything looks normal.

The Secret:

Node taints and missing tolerations.

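A node might carry a NoExecute taint, often added after the pod was already scheduled, that your pod does not tolerate. NoSchedule and PreferNoSchedule only affect new placements; NoExecute is the effect that removes running pods. Kubernetes quietly evicts the pod and reschedules it elsewhere.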

Interactive Check:

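Run a node inspection, for example kubectl describe node NODE_NAME, against the node the pod was last running on.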

Look for taints and compare them with your pod tolerations.
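The comparison can be sketched in a few lines. The example below follows the documented matching rules in simplified form (it ignores tolerationSeconds) and assumes taints and tolerations are plain dicts shaped like the Kubernetes JSON fields; the function names and the sample taints are hypothetical.

```python
from typing import Dict, List


def tolerates(toleration: Dict, taint: Dict) -> bool:
    """Return True if a single toleration matches a single taint."""
    # An empty effect on the toleration matches every effect.
    effect = toleration.get("effect")
    if effect and effect != taint.get("effect"):
        return False

    operator = toleration.get("operator", "Equal")  # Equal is the default
    key = toleration.get("key")
    if not key:
        # An empty key is only meaningful with Exists: it matches all taints.
        return operator == "Exists"
    if key != taint.get("key"):
        return False
    if operator == "Exists":
        return True
    return toleration.get("value", "") == taint.get("value", "")


def evicting_taints(taints: List[Dict], tolerations: List[Dict]) -> List[Dict]:
    """Return the NoExecute taints that no toleration covers."""
    return [
        taint
        for taint in taints
        if taint.get("effect") == "NoExecute"
        and not any(tolerates(t, taint) for t in tolerations)
    ]
```

Any taint this returns is a candidate explanation for the periodic evictions. An empty list means the cause is somewhere else.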

The Cost:

Every eviction kills warm cache, restarts services, and increases load during startup. You are paying for instability, not compute.

04. The Zombie CronJob Apocalypse 🧟

This one is more common than people admit.

The Scenario:

Jobs that started months ago are still “Running.”

The Secret:

Missing activeDeadlineSeconds or proper failure handling.

The job never completes, never fails, and never triggers alerts. It just stays alive forever.

Interactive Check:

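Run a job listing, for example kubectl get jobs --all-namespaces, and check the DURATION and AGE columns.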

If you see jobs running for weeks or months, you have zombies.
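The same check can be scripted. This sketch assumes a list of Job objects as plain dicts (the shape of the items in a JSON job listing) with status.startTime, status.completionTime, and status.active fields. The function name find_zombie_jobs and the seven-day threshold are arbitrary choices for illustration.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List


def find_zombie_jobs(
    jobs: List[Dict],
    now: datetime,
    max_age: timedelta = timedelta(days=7),
) -> List[str]:
    """Return "namespace/name" for jobs still active after max_age."""
    zombies = []
    for job in jobs:
        status = job.get("status", {})
        start = status.get("startTime")
        # Skip jobs that never started or that already completed.
        if not start or status.get("completionTime"):
            continue
        # Skip jobs with no running pods (e.g. failed jobs).
        if status.get("active", 0) < 1:
            continue
        # Kubernetes timestamps are RFC 3339 in UTC, e.g. 2024-01-01T00:00:00Z.
        started = datetime.strptime(start, "%Y-%m-%dT%H:%M:%SZ").replace(
            tzinfo=timezone.utc
        )
        if now - started > max_age:
            meta = job["metadata"]
            zombies.append(f'{meta["namespace"]}/{meta["name"]}')
    return zombies
```

Passing now explicitly keeps the function easy to test. In a real audit it would be datetime.now(timezone.utc).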

The Cost:

Persistent volumes, memory, and compute are tied up in workloads that serve zero purpose.

05. The “Golden Child” Syndrome 👑

The most dangerous one because it looks perfect.

The Scenario:

A stable service with zero incidents for years. Running 10 replicas “for safety.”

The Secret:

Massive over-provisioning.

Requests and limits were set during a peak load test years ago and never updated.

Interactive Check:

Compare:

- CPU and memory usage

- Requested resources

If usage sits below 10 percent of what you requested most of the time, you are overpaying.
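As a rough sketch of that comparison for CPU, the helper below converts Kubernetes-style quantities such as 250m or 2 into millicores and applies the 10 percent rule of thumb. The function names and sample values are illustrative, and other suffixes (for example the nanocore values some metrics APIs return) are deliberately not handled.

```python
def cpu_millicores(quantity: str) -> float:
    """Convert a CPU quantity like "250m" or "2" to millicores."""
    quantity = quantity.strip()
    if quantity.endswith("m"):
        return float(quantity[:-1])
    # A bare number is a count of whole cores.
    return float(quantity) * 1000.0


def utilization(usage: str, request: str) -> float:
    """Return usage as a fraction of the requested CPU."""
    requested = cpu_millicores(request)
    if requested <= 0:
        raise ValueError("request must be greater than zero")
    return cpu_millicores(usage) / requested


def is_overprovisioned(usage: str, request: str, threshold: float = 0.10) -> bool:
    """True when usage is below the threshold share of the request."""
    return utilization(usage, request) < threshold


# Hypothetical reading: 40m used against a 500m request.
flagged = is_overprovisioned("40m", "500m")
```

A single sample proves little. The rule says "most of the time", so in practice the usage figure should be a high percentile over days or weeks, not one snapshot.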

The Cost:

You are paying for capacity you never use.

The Smarter Approach:

Use Vertical Pod Autoscaler or intelligent right-sizing.

Vertical Pod Autoscaler, for example, can run in recommendation-only mode (updateMode set to "Off"), watching actual usage and suggesting requests without touching running pods.

The DevOps Inside Audit Challenge 🚀

Enough theory.

Go to your terminal right now and run:

Now, be honest.

If that list is longer than your coffee bill this morning, your cluster is not as healthy as it looks.

Final Thought

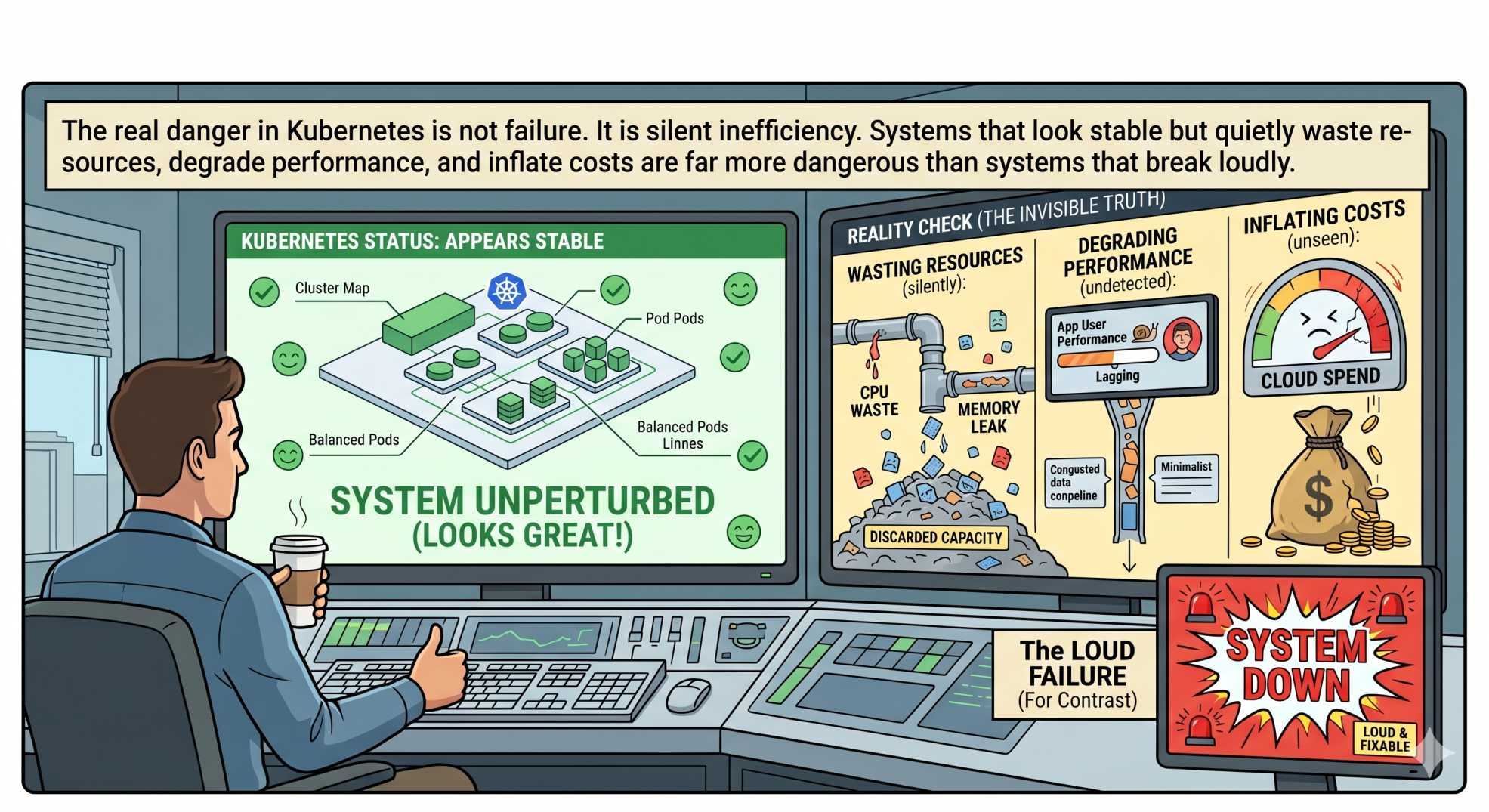

The real danger in Kubernetes is not failure.

It is silent inefficiency.

Systems that look stable but quietly waste resources, degrade performance, and inflate costs are far more dangerous than systems that break loudly.

💬 Quick Question: What is the most expensive “invisible bug” you’ve seen in production? A typo, a zombie job, or something worse?

Let us know in the comments!

“Broken systems get fixed. Silent systems get paid for.”