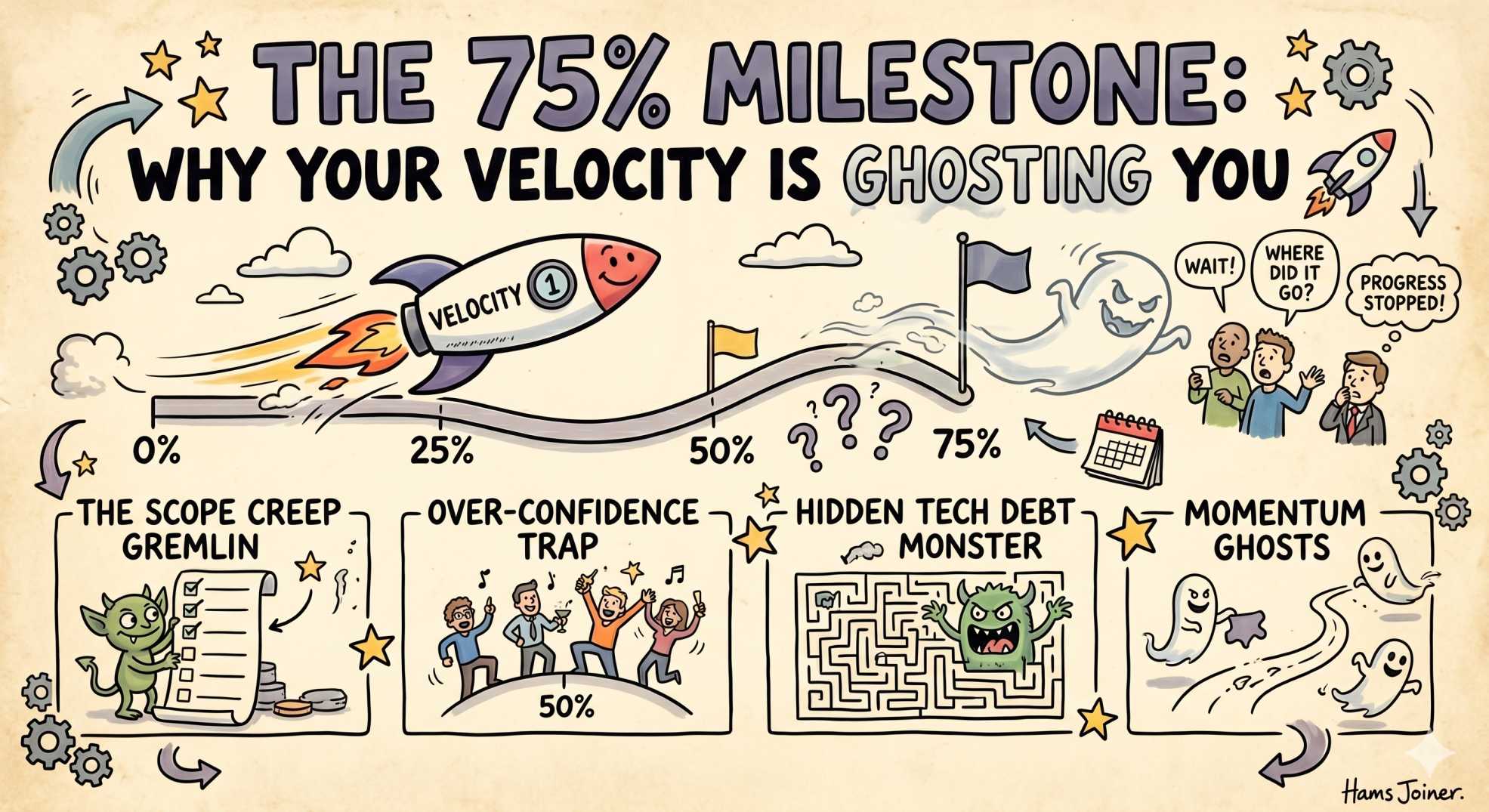

The 75% Milestone: Why Your Velocity is Ghosting You 🚀

Is AI writing code faster than engineers can review it? This post explores the AI Productivity Paradox, where coding agents accelerate software development but shift the real bottleneck to architecture reviews, debugging, security validation, and operational risk analysis.

Here at DevOps Inside, we’ve spent the last few weeks debugging the "100 Reasons Your Cluster is Crying." We’ve looked at everything from exit codes to the "Deep State" of the control plane.

But while we’ve been busy fixing infrastructure, the way we build that infrastructure has quietly shifted beneath our feet.

Recently, major tech giants reported a staggering figure: 75% of their new code is now AI-generated.

Following our "From Pipelines to Prompts" series, we have to ask a dangerous question:

If the bots are doing the heavy lifting, why are engineers still drowning in 50-hour workweeks?

Welcome to the AI Productivity Paradox.

⚡ The AI Productivity Paradox

In the SRE world, velocity used to mean turning a Jira ticket into a merged Pull Request as quickly as possible.

Today?

That Pull Request is probably generated by a coding agent before you even finish your first coffee.

And yet, despite this explosion in generated code, something strange is happening:

Teams are not shipping dramatically faster.

The bottleneck moved.

We traded the Writing Phase for the Audit Phase.

And it turns out reading AI-generated systems is often harder than writing them manually.

🧠 The Paradox: More Code, Less "Ship"

If an AI can generate a 200-line Terraform module in three seconds, sprint velocity should be exploding… right?

The Reality

The cognitive load did not disappear.

It simply changed form.

🔥 The SRE Example

An AI agent generates a beautiful Helm chart for your new microservice.

Everything looks perfect.

Until Kubernetes rejects the deployment because the AI accidentally included a hostPath mount that violates your OPA/Gatekeeper policy.

Now the clock starts ticking.
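One way to catch this class of failure before the admission controller does is a small pre-flight check in CI. What follows is a minimal sketch, not a replacement for Gatekeeper: it assumes the chart has already been rendered and converted to JSON, and the function name, the manifest, and the volume names are all hypothetical.

```python
import json

# Workload kinds whose pod spec lives at spec.template.spec.
# CronJob nests one level deeper and is deliberately left out of this sketch.
TEMPLATED_KINDS = {"Deployment", "StatefulSet", "DaemonSet", "ReplicaSet", "Job"}


def find_hostpath_volumes(manifest: dict) -> list[str]:
    """Return the names of volumes that use hostPath in a single manifest."""
    kind = manifest.get("kind")
    if kind == "Pod":
        pod_spec = manifest.get("spec", {})
    elif kind in TEMPLATED_KINDS:
        pod_spec = manifest.get("spec", {}).get("template", {}).get("spec", {})
    else:
        return []  # not a workload kind this sketch understands
    return [
        volume.get("name", "<unnamed>")
        for volume in pod_spec.get("volumes", [])
        if "hostPath" in volume
    ]


# Hypothetical rendered manifest (assumed to be the chart output, converted to JSON).
RENDERED = json.loads("""
{
  "apiVersion": "apps/v1",
  "kind": "Deployment",
  "metadata": {"name": "orders"},
  "spec": {
    "template": {
      "spec": {
        "containers": [{"name": "app", "image": "example/orders:1.0"}],
        "volumes": [
          {"name": "cache", "emptyDir": {}},
          {"name": "host-logs", "hostPath": {"path": "/var/log"}}
        ]
      }
    }
  }
}
""")

print(find_hostpath_volumes(RENDERED))  # prints ['host-logs']
```

A check like this only mirrors one rule. Its value is the fast feedback: the reviewer sees the offending volume by name instead of reverse-engineering an admission error.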

⏳ The Time Sink

Instead of writing YAML, you spend forty minutes debugging:

- Admission controller failures

- Security policy conflicts

- Invalid architecture assumptions

- Hidden infra anti-patterns

And here’s the painful part:

If you had written the YAML yourself, you probably would have known exactly where the issue lived.

That is the paradox.

AI accelerates generation.

But generation is no longer the expensive part.
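A back-of-the-envelope calculation in the style of Amdahl's law makes the point concrete. The numbers are assumptions chosen purely for illustration: suppose writing code was 20% of the total ticket-to-production time and AI makes that step 10x faster, while everything else stays the same.

```python
def overall_speedup(writing_share: float, writing_speedup: float) -> float:
    """Estimate end-to-end speedup when only the writing step gets faster.

    writing_share   -- fraction of total delivery time spent writing code (0..1)
    writing_speedup -- how many times faster the writing step becomes (> 0)
    """
    if not 0.0 <= writing_share <= 1.0:
        raise ValueError("writing_share must be between 0 and 1")
    if writing_speedup <= 0:
        raise ValueError("writing_speedup must be positive")
    # Time left over: the untouched share plus the shrunken writing share.
    remaining_time = (1.0 - writing_share) + writing_share / writing_speedup
    return 1.0 / remaining_time


# Assumed inputs, not measured data: 20% writing, 10x faster generation.
print(round(overall_speedup(0.2, 10), 2))  # prints 1.22
```

Under those assumptions the whole pipeline gets about 1.22x faster, and even infinitely fast generation would cap out at 1.25x. The model is also generous: it assumes review and debugging time stay flat, which is exactly what the paradox says does not happen.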

🏗️ From 'Author' to 'Architectural Auditor'

The 75% Milestone marks the unofficial death of the "Junior Typist" era of engineering.

We are no longer valued primarily for typing syntax.

We are valued for:

- Systems thinking

- Architecture decisions

- Failure prediction

- Production intuition

- Risk detection

🎯 The Skill Shift

Your value in 2026 will not come from memorizing:

- Go syntax

- Terraform arguments

- Kubernetes flags

- AWS resource names

It will come from understanding:

- Why architectures fail

- How systems behave under pressure

- What breaks at 3 AM

- Which "perfect-looking" AI output hides operational disaster

👻 The Silent Hallucination

AI is excellent at syntax.

It is mediocre at operational judgment.

For example:

- It may recommend ReadWriteOnce storage for a workload that clearly requires ReadWriteMany

- It may scale stateless apps correctly, but ignore regional failover patterns

- It may optimize for speed while quietly violating security policy

The danger is not broken syntax.

The danger is architectural drift that looks correct.
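The storage example is the kind of drift a simple heuristic can surface. Here is a rough sketch, assuming manifests are already loaded as dictionaries. The function name and sample data are hypothetical, and the rule is deliberately conservative: ReadWriteOnce restricts a volume to a single node, so several replicas can still share it if they all land on the same node.

```python
def risky_rwo_claims(deployment: dict, claims: dict[str, dict]) -> list[str]:
    """Flag ReadWriteOnce claims mounted by a Deployment with more than one replica.

    deployment -- a Deployment manifest as a dict
    claims     -- PersistentVolumeClaim manifests keyed by claim name
    """
    spec = deployment.get("spec", {})
    # Kubernetes defaults to one replica when the field is omitted.
    if spec.get("replicas", 1) <= 1:
        return []
    volumes = spec.get("template", {}).get("spec", {}).get("volumes", [])
    flagged = []
    for volume in volumes:
        claim_name = volume.get("persistentVolumeClaim", {}).get("claimName")
        if claim_name is None:
            continue  # not a PVC-backed volume
        modes = claims.get(claim_name, {}).get("spec", {}).get("accessModes", [])
        if "ReadWriteOnce" in modes and "ReadWriteMany" not in modes:
            flagged.append(claim_name)
    return flagged


# Hypothetical AI-generated output: three replicas sharing one RWO claim.
DEPLOYMENT = {
    "kind": "Deployment",
    "spec": {
        "replicas": 3,
        "template": {"spec": {"volumes": [
            {"name": "uploads",
             "persistentVolumeClaim": {"claimName": "shared-uploads"}},
        ]}},
    },
}
CLAIMS = {"shared-uploads": {"spec": {"accessModes": ["ReadWriteOnce"]}}}

print(risky_rwo_claims(DEPLOYMENT, CLAIMS))  # prints ['shared-uploads']
```

Every manifest here is syntactically valid, which is the point: the problem only shows up when someone asks how the replicas will be scheduled.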

🤖 The AI Edge: Intelligent Validation

This is where Intelligent Automation becomes critical.

If AI writes the code, we need systems capable of validating intent, not just syntax.

🧩 Context-Aware Linting

Traditional linters check:

- Brackets

- Imports

- Formatting

- Static rules

AI-driven validation checks:

- Reliability assumptions

- Security intent

- Disaster recovery patterns

- Organizational architecture standards

We’re already seeing tools evolve from:

“This code is invalid.”

to:

“This code is valid, but it bypasses the failover strategy your platform team defined six months ago.”

That is a completely different level of engineering intelligence.
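To make the contrast concrete, here is a tiny hand-written stand-in for that second kind of message. It is a static rule, not an AI-driven validator, and it rests on assumptions: the platform standard ("workloads labeled tier: critical must spread across zones") and the label itself are invented for illustration. The topology key is the well-known Kubernetes zone label.

```python
from dataclasses import dataclass
from typing import Callable, Optional

ZONE_KEY = "topology.kubernetes.io/zone"  # well-known Kubernetes node label


@dataclass
class Finding:
    rule: str
    message: str


def requires_zone_spread(manifest: dict) -> Optional[Finding]:
    """Hypothetical org standard: critical workloads must spread across zones."""
    labels = manifest.get("metadata", {}).get("labels", {})
    if labels.get("tier") != "critical":  # "tier" is an assumed label convention
        return None
    pod_spec = manifest.get("spec", {}).get("template", {}).get("spec", {})
    constraints = pod_spec.get("topologySpreadConstraints", [])
    if any(c.get("topologyKey") == ZONE_KEY for c in constraints):
        return None
    return Finding(
        rule="zone-failover",
        message="Manifest is valid, but it skips the zone spread required "
                "for tier=critical workloads.",
    )


RULES: list[Callable[[dict], Optional[Finding]]] = [requires_zone_spread]


def review(manifest: dict) -> list[Finding]:
    """Run every intent rule and collect the findings."""
    findings = []
    for rule in RULES:
        finding = rule(manifest)
        if finding is not None:
            findings.append(finding)
    return findings


# Hypothetical manifest that any syntax linter would accept.
CRITICAL_APP = {
    "kind": "Deployment",
    "metadata": {"name": "payments", "labels": {"tier": "critical"}},
    "spec": {"replicas": 3, "template": {"spec": {"containers": []}}},
}

for finding in review(CRITICAL_APP):
    print(f"[{finding.rule}] {finding.message}")
```

The interesting work is not the rule engine. It is writing down what the platform team decided six months ago in a form a machine can check.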

🛰️ The Interactive SRE Reality Check

Take a look at your last three merged Pull Requests.

Ask yourself honestly:

- What percentage was prompted versus manually typed?

- How much time did you spend reviewing AI output?

- How much mental energy went into verifying that the generated logic would not trigger a P0 incident?

Because if engineers now spend:

- 20% generating

- 80% validating

…then the role has fundamentally changed.

You did not automate yourself out of the job.

You evolved into:

A Full-Time Architectural Reviewer.

🧠 The Verdict

Human-only engineering is not dead.

But it is becoming a boutique skill.

Like a master watchmaker assembling gears by hand, there will always be environments where handcrafted logic matters:

- Kernel engineering

- Critical infrastructure

- Ultra-sensitive security systems

- High-performance distributed systems

But for most teams, the 75% milestone is not about writing less code.

It is about thinking more deeply about the code that already exists.

The teams that win in the AI era will not be the fastest typists.

They will be the fastest thinkers.

Because the productivity paradox only breaks when engineers stop trying to out-type the AI…

…and start learning how to out-think it.

💬 Final Thoughts

Are you actually shipping faster with AI?

Or are you simply spending more time trapped in Code Review Hell™?

Has AI reduced engineering effort…

…or just moved the stress into a different phase of the workflow?

Let’s debate the paradox in the comments.

And more importantly:

What does the next generation of engineering even look like when code becomes the easy part?

"The future’s best engineers will not be the ones who write the most code. They will be the ones who understand the consequences of it fastest."