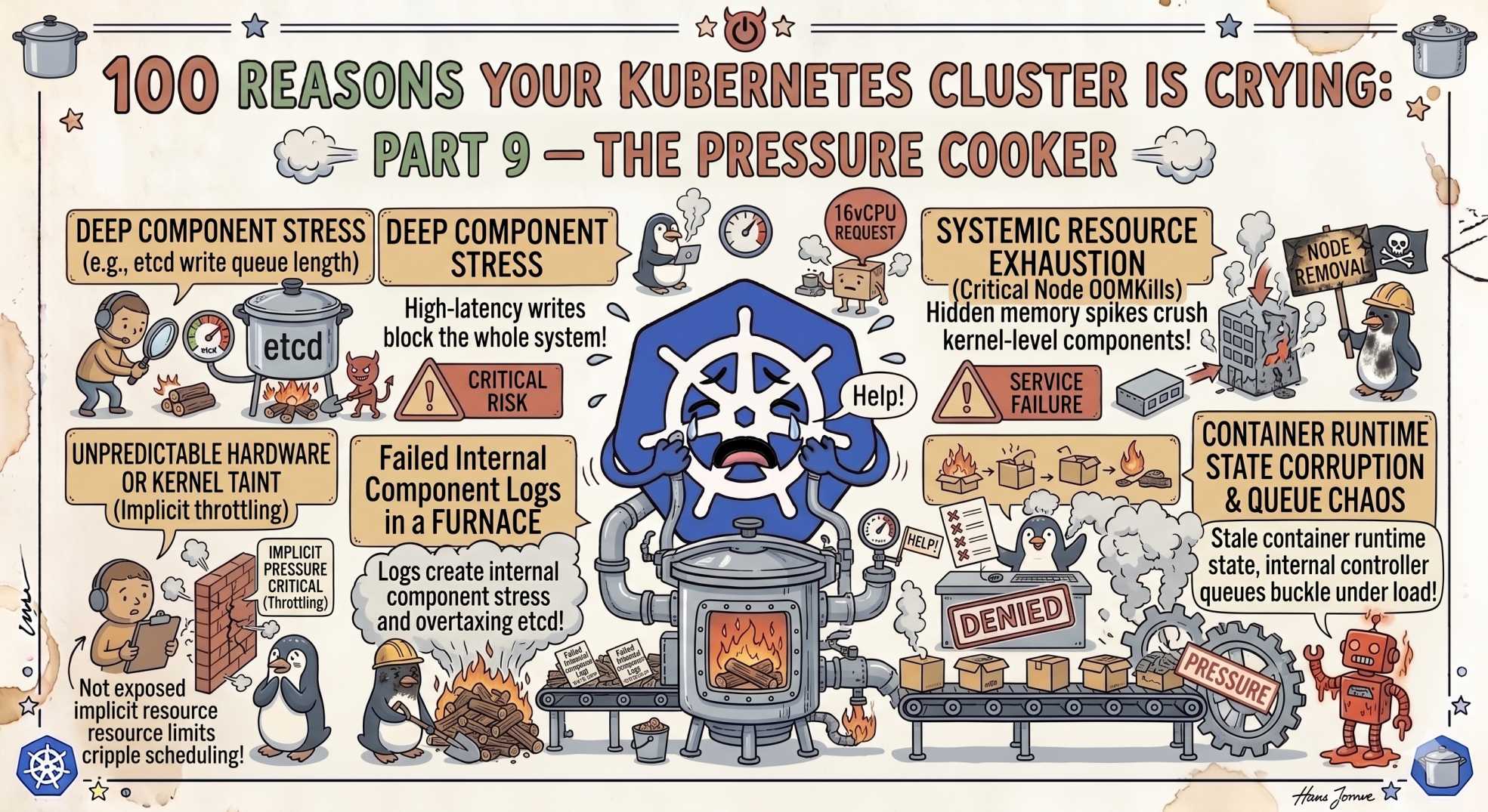

100 Reasons Your Kubernetes Cluster is Crying: Part 9 - The Pressure Cooker

Nodes under pressure and pods getting evicted? Part 9 reveals 10 Kubernetes node failures, from hard evictions and CPU starvation to DNS bottlenecks and conntrack limits.

The team at DevOps Inside knows that if the Control Plane (the brain) we explored in Part 8 is the thinker, the Node is the doer. But even a genius brain cannot save a body that is collapsing under its own weight.

Following our "From Pipelines to Prompts" series, we’re moving from the "Deep State" of the API server to the physical reality of the "Pressure Cooker." If your nodes are gasping for air, they will start sacrificing your pods to save themselves.

Here is Part 9: 10 reasons your nodes are under too much pressure.

In the SRE world, "Node Pressure" is the equivalent of a Code Red 🚨

It’s when the OS and the Kubelet stop being polite and start getting physical.

Let’s dive into the next 10 reasons your cluster is sobbing as it tries to keep its head above water.

81. Hard Eviction Triggered: The Immediate Eviction

Your node has hit a critical resource threshold, usually disk or memory, and it’s not asking nicely anymore.

The SRE Reality:

Kubernetes will kill pods immediately without a grace period. It becomes "Save the Node" at any cost.

The Fix:

This is a fire drill. Scale your node group or move workloads manually. Then check the memory.available and nodefs.available thresholds set under evictionHard in your Kubelet configuration.
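
To see how close a node is to the memory threshold, you can redo the Kubelet's arithmetic by hand. The sketch below follows the calculation described in the Kubernetes node-pressure documentation: available memory is capacity minus the working set (usage minus inactive file cache). The function names are made up for this post, and the file paths in the comments assume a cgroup v1 node.

```shell
# memory_available_bytes CAPACITY USAGE INACTIVE_FILE   (all values in bytes)
# available = capacity - working_set, where working_set = usage - inactive_file
memory_available_bytes() {
  working_set=$(( $2 - $3 ))
  if [ "$working_set" -lt 0 ]; then
    working_set=0
  fi
  echo $(( $1 - working_set ))
}

# below_threshold AVAILABLE_BYTES THRESHOLD_MIB
# Succeeds (exit 0) when available memory is under the threshold.
below_threshold() {
  [ "$1" -lt $(( $2 * 1024 * 1024 )) ]
}

# On a cgroup v1 node the inputs would come from (assumed paths):
#   capacity:      MemTotal in /proc/meminfo (kB, multiply by 1024)
#   usage:         /sys/fs/cgroup/memory/memory.usage_in_bytes
#   inactive_file: total_inactive_file in /sys/fs/cgroup/memory/memory.stat
```

The default hard threshold on Linux is memory.available<100Mi, so `below_threshold "$available" 100` tells you whether the Kubelet would already be evicting under default settings.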

82. Soft Eviction: The Polite Request

The node is starting to feel the heat, but it gives your pods a moment to "pack their bags."

The SRE Reality:

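Once a soft threshold has been exceeded for its full eviction-soft-grace-period, the Kubelet evicts pods but still honors terminationGracePeriodSeconds, capped by eviction-max-pod-grace-period. This is your warning shot.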

The Fix:

Audit your eviction-soft thresholds. If this happens often, your nodes are consistently overcommitted: the workloads need more memory or disk than the node group provides.

83. Noisy Neighbor (CPU): The Resource Thief

One pod hogs CPU cycles, slowing everything else down.

The SRE Reality:

This happens when pods have no CPU limits. They burst and steal resources.

The Fix:

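Always define CPU requests and limits. To detect throttling, watch the nr_throttled and throttled_usec counters in the container's cgroup cpu.stat file, or the container_cpu_cfs_throttled_periods_total metric exposed by cAdvisor.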
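
A quick way to put a number on throttling is to compare throttled periods with total periods in the container's cpu.stat file. This is a minimal sketch: `throttle_pct` is a hypothetical helper name, and the location of cpu.stat depends on your cgroup version and container runtime, so the path in the comment is a placeholder.

```shell
# throttle_pct: reads a cgroup cpu.stat file on stdin and prints the
# percentage of CFS periods in which the container was throttled.
throttle_pct() {
  awk '$1 == "nr_periods"   { periods = $2 }
       $1 == "nr_throttled" { throttled = $2 }
       END {
         if (periods > 0) printf "%.1f\n", 100 * throttled / periods
         else print "0.0"
       }'
}

# Usage on a node (the cgroup path is an assumption and varies by setup):
#   throttle_pct < /sys/fs/cgroup/<pod-cgroup>/<container-id>/cpu.stat
```

A container that is throttled in a large share of its periods is hitting its limit. A container with no limit never shows up here, which is exactly why it can starve its neighbors.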

84. Kernel Panics: The Hard Crash

The Linux kernel hits an unrecoverable error and stops.

The SRE Reality:

The DevOps version of a system crash. Often caused by hardware issues, bad drivers, or memory corruption.

The Fix:

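Check dmesg or /var/log/syslog. Keep in mind that dmesg only covers the current boot, so after a reboot look at the previous boot's kernel log instead (journalctl -k -b -1, if the journal is persistent). In cloud setups, terminate the node and let autoscaling replace it.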

85. Zombie Pods: The Walking Dead

The pod shows "Running," but the container process is gone.

The SRE Reality:

The Kubelet lost sync with the runtime. It creates ghost workloads.

The Fix:

Restart the Kubelet. If it persists, investigate container runtime issues.

86. Image GC Failure: The Digital Hoarder

Disk fills up because old images are never deleted.

The SRE Reality:

Image garbage collection is misconfigured.

The Fix:

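Lower image-gc-high-threshold (for example, from the default 85% to 80%) so cleanup happens earlier. Keep image-gc-low-threshold below it, since the Kubelet rejects a low threshold that is not smaller than the high one.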
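
To check whether a node is already past the point where image GC should kick in, compare the image filesystem's usage with the high threshold. A rough sketch follows; both helper names are invented for this post, and /var/lib/containerd is only an assumed image path (it depends on your runtime).

```shell
# disk_used_pct: reads `df -P <path>` output on stdin and prints the
# usage percentage of the first listed filesystem as a bare integer.
disk_used_pct() {
  awk 'NR == 2 { sub(/%/, "", $5); print $5 }'
}

# gc_should_run USED_PCT HIGH_THRESHOLD_PCT
# Succeeds (exit 0) when usage has reached the high threshold.
gc_should_run() {
  [ "$1" -ge "$2" ]
}

# Usage on a node (path is an assumption for a containerd setup):
#   used=$(df -P /var/lib/containerd | disk_used_pct)
#   gc_should_run "$used" 85 && echo "image GC should be reclaiming space"
```

If usage sits above the threshold and never drops, garbage collection is either misconfigured or has nothing it is allowed to delete, for example because every image is still in use.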

87. Conntrack Table Full: The Traffic Jam

Requests start dropping even though everything looks healthy.

The SRE Reality:

The Linux connection tracking table is full.

The Fix:

Increase net.netfilter.nf_conntrack_max via sysctl.
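
Before raising the limit, it helps to know how full the table actually is. The kernel exposes both the current entry count and the maximum under /proc/sys/net/netfilter. Here is a small sketch; `conntrack_pct` is a hypothetical helper, and the 262144 in the comment is an example value, not a recommendation.

```shell
# conntrack_pct COUNT MAX
# Prints how full the connection tracking table is, as a whole percentage.
conntrack_pct() {
  if [ "$2" -le 0 ]; then
    echo 0
    return
  fi
  echo $(( 100 * $1 / $2 ))
}

# Usage on a node:
#   conntrack_pct "$(cat /proc/sys/net/netfilter/nf_conntrack_count)" \
#                 "$(cat /proc/sys/net/netfilter/nf_conntrack_max)"
#
# Raising the limit (example value only):
#   sysctl -w net.netfilter.nf_conntrack_max=262144
```

When the table does overflow, the kernel logs "nf_conntrack: table full, dropping packet", which is worth grepping for in the kernel log.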

88. NodeLocal DNSCache Misses: The Resolution Bottleneck

DNS becomes slow across the node.

The SRE Reality:

Too many DNS queries overload CoreDNS and conntrack.

The Fix:

Deploy NodeLocal DNSCache to offload DNS traffic locally.

89. HugePages Mismatch: The Oversized Luggage

Your app needs HugePages, but the node cannot provide them.

The SRE Reality:

HugePages must be pre-allocated at the OS level.

The Fix:

Pre-allocate HugePages using DaemonSets or custom images.
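
You can confirm what the OS has actually reserved by reading the HugePages counters in /proc/meminfo. A minimal sketch, assuming the default 2 MiB page size; `hugepages_summary` is a made-up helper name and the page count in the comment is an example.

```shell
# hugepages_summary: reads /proc/meminfo-style input on stdin and prints
# the total and free pre-allocated huge pages.
hugepages_summary() {
  awk -F': *' '$1 == "HugePages_Total" { total = $2 }
               $1 == "HugePages_Free"  { free = $2 }
               END { printf "total=%d free=%d\n", total, free }'
}

# Usage on a node:
#   hugepages_summary < /proc/meminfo
#
# Pre-allocating pages at runtime (example count; can fail on fragmented memory):
#   sysctl -w vm.nr_hugepages=512
```

A total of 0 means any pod requesting hugepages-2Mi will stay Pending on this node. After changing the allocation, the Kubelet may need a restart before the node reports the new capacity.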

90. Swap Enabled: The Forbidden Fruit

The node is stuck in NotReady because swap is enabled and, by default, the Kubelet refuses to start.

The SRE Reality:

Swap breaks Kubernetes resource guarantees.

The Fix:

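Disable swap using swapoff -a, and remove the swap entry from /etc/fstab so it stays off after a reboot. If you are on a newer Kubernetes version with swap support, configure it explicitly instead: set failSwapOn: false and choose a memorySwap.swapBehavior in the Kubelet configuration.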
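
To verify that swap is really off, count the active swap devices listed in /proc/swaps. The file always starts with a header line, so anything after it is an active swap area. `active_swap_count` is a hypothetical helper for this post.

```shell
# active_swap_count: reads /proc/swaps-style input on stdin and prints
# the number of active swap areas (lines after the header).
active_swap_count() {
  awk 'NR > 1 && NF > 0 { n++ } END { print n + 0 }'
}

# Usage on a node:
#   active_swap_count < /proc/swaps
#
# Turning swap off for the running system:
#   swapoff -a
```

A result of 0 means the Kubelet's default swap check will pass.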

🤖 The AI Edge: Anomaly Detection vs. Static Alerts

In 2026, we are moving beyond static alerts like "CPU > 80%."

AI agents now perform Behavioral Anomaly Detection.

Instead of reacting late, they learn normal patterns. If memory suddenly behaves differently, even at 40%, they flag it early. This helps prevent issues like noisy neighbors and memory leaks before they trigger evictions.

It turns the "Pressure Cooker" into a system that vents itself before exploding.

⚡ Interactive SRE Challenge

Run:

kubectl get pods -A | grep -i "evicted"

If you see results, pick one and run:

kubectl describe pod <pod-name> -n <namespace>

Was it DiskPressure or MemoryPressure?
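
If there are too many evicted pods to inspect one by one, you can tally them by the resource that ran out. This sketch assumes the usual eviction message format ("The node was low on resource: memory. ..."); `eviction_resources` is a made-up helper name.

```shell
# eviction_resources: reads eviction messages on stdin (one per line) and
# prints "<resource> <count>" for each resource named in them.
eviction_resources() {
  sed -n 's/.*low on resource: \([a-z-]*\).*/\1/p' |
    sort | uniq -c | awk '{ print $2, $1 }'
}

# Usage against a cluster:
#   kubectl get pods -A \
#     -o jsonpath='{range .items[?(@.status.reason=="Evicted")]}{.status.message}{"\n"}{end}' |
#     eviction_resources
```

Mostly memory points at MemoryPressure. Mostly ephemeral-storage points at DiskPressure.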

🧠 The Verdict

The Node is where everything becomes real.

If you ignore OS limits and hardware constraints, the Kubelet will eventually take control and start killing workloads to survive.

Respect the machine, or the machine will make decisions for you.

🚀 What’s Next?

Stay tuned for Part 10, the grand finale, where we move into future-proofing your cluster with AI-driven observability and best practices.

💬 Quick Question: What’s the most "violent" eviction you’ve ever seen? Did a Conntrack limit ever take down your entire API?

Let’s swap the "Pressure Cooker" stories in the comments.

"A Kubernetes node doesn’t fail suddenly. It warns you quietly, then punishes you violently."