100 Reasons Your Kubernetes Cluster is Crying: Part 8 - The Deep State 🧠

API server unresponsive or cluster acting dead? Part 8 reveals 10 Kubernetes control plane failures, from etcd crashes and expired certificates to webhook loops and version skew issues.

The team at DevOps Inside knows that even if your "Seesaw" (scaling) is balanced, you’re still in deep trouble if the cluster’s brain starts to malfunction.

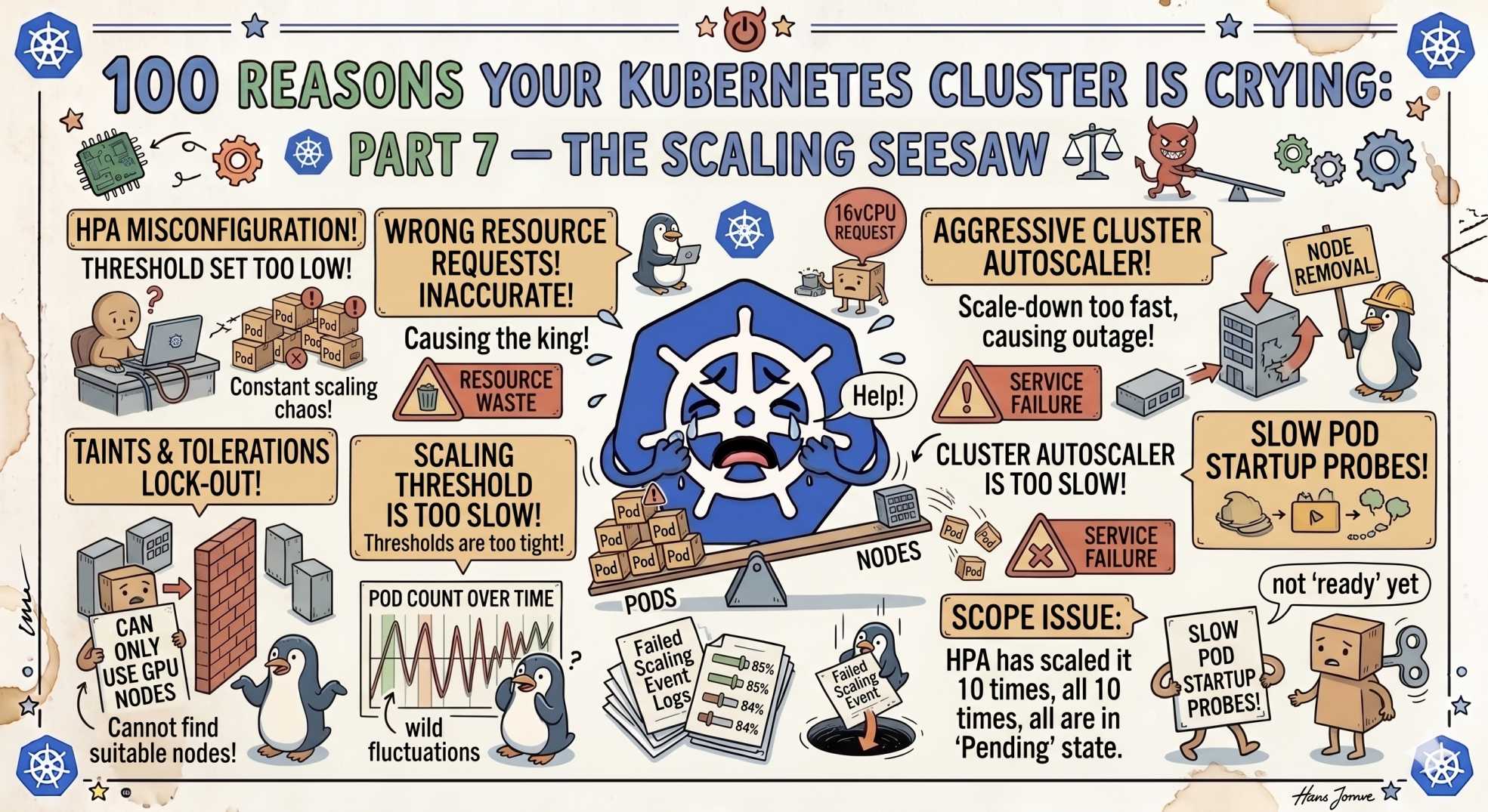

In Part 7, we tackled the "Scaling Seesaw", those frustrating moments when your HPA flaps or your rollouts just stop.

But now, we’re diving deeper into the infrastructure. It’s one thing when an app fails. It’s another when the Control Plane itself starts gasping for air.

Following our "From Pipelines to Prompts" series, we’re moving from the application layer to the "Deep State" of Kubernetes. If your API server is ghosting you, your cluster isn't just crying, it’s in a coma.

Here is Part 8: 10 reasons your control plane is losing its mind.

71. API Server Not Reachable: The Ultimate Ghosting 👻

You run kubectl and get nothing. Just a timeout.

The SRE Reality:

If the API server is down, your cluster is effectively dead.

The Fix:

Check:

- control plane node health

- load balancer status

- kube-apiserver logs
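A first pass through that checklist could look like the sketch below. It assumes `curl` is installed and that the health endpoints are readable without credentials (a common default, but it can be disabled). `probe_apiserver` is a hypothetical helper name, and the endpoint address is something you supply.

```shell
# Probe the /livez endpoint directly, bypassing kubeconfig.
# The endpoint argument (for example https://<lb-or-node>:6443) comes from the caller.
probe_apiserver() {
  endpoint="$1"
  # -k skips TLS verification (triage only), -f turns HTTP errors into failures,
  # --max-time stops the probe from hanging on a dead load balancer.
  if body=$(curl -ksf --max-time 5 "$endpoint/livez"); then
    echo "reachable: $body"
  else
    echo "unreachable: check node health, the load balancer, then kube-apiserver logs"
    return 1
  fi
}
```

Run it against the load balancer address first, then against each control plane node. If a node answers and the load balancer does not, the load balancer is the likely culprit.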

72. etcd Leader Loss: The Existential Crisis 🧬

Your cluster stops updating.

The SRE Reality:

etcd lost its Raft leader, usually because of network latency, a partition, or slow disk writes. Until a new leader is elected, writes stall.

The Fix:

Check disk I/O performance first. Slow disks kill etcd stability.
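Before digging into disks, it helps to confirm who the leader is and whether every member agrees. The sketch below assumes `etcdctl` is available on a control plane node and that the certificates live at the kubeadm default paths. `etcd_status` is a hypothetical helper name.

```shell
# Ask every etcd member for its leader flag, raft term, and DB size.
# Certificate paths are the kubeadm defaults; adjust them for other installers.
etcd_status() {
  ETCDCTL_API=3 etcdctl \
    --endpoints="${1:-https://127.0.0.1:2379}" \
    --cacert=/etc/kubernetes/pki/etcd/ca.crt \
    --cert=/etc/kubernetes/pki/etcd/server.crt \
    --key=/etc/kubernetes/pki/etcd/server.key \
    endpoint status --cluster -w table
}
```

A raft term that keeps climbing between runs suggests repeated elections. If you scrape etcd metrics, the `etcd_disk_wal_fsync_duration_seconds` histogram is the usual place to look for slow disks.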

73. Certificate Expired: The "Yearly Tax" ⏰

Everything worked yesterday. Today, nothing connects.

The SRE Reality:

Certificates expired.
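To confirm the diagnosis on a single certificate, or on a cluster that was not built with kubeadm, `openssl` can answer the question directly. This is a minimal sketch: `cert_expires_within` is a hypothetical helper, and the 30-day default is an arbitrary choice.

```shell
# Succeeds (exit 0) when the certificate in $1 expires within $2 days (default 30).
cert_expires_within() {
  cert="$1"
  days="${2:-30}"
  # -checkend N exits 0 if the cert is still valid N seconds from now,
  # so the result is inverted to mean "expiring soon".
  ! openssl x509 -checkend "$((days * 86400))" -noout -in "$cert" >/dev/null
}
```

On a kubeadm cluster, `cert_expires_within /etc/kubernetes/pki/apiserver.crt 30 && echo "renew soon"` would flag the API server certificate a month ahead.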

The Fix:

Run:

kubeadm certs check-expiration

74. Webhook Feedback Loops: The Infinite Mirror 🔁

Requests spiral out of control.

The SRE Reality:

Your webhook keeps modifying the same object repeatedly.

The Fix:

Ensure idempotent webhook logic.

75. Leaked Finalizers: The Namespace Limbo 🕳️

A namespace refuses to die.

The SRE Reality:

A finalizer is blocking deletion.
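To see which object is holding things up, one option is to list whatever still exists in the stuck namespace. The sketch below assumes `kubectl` can still reach the API server. `list_leftovers` is a hypothetical helper name.

```shell
# List every namespaced object that still exists in a namespace stuck in
# Terminating. Whatever remains is a candidate for a leaked finalizer.
list_leftovers() {
  ns="$1"
  for kind in $(kubectl api-resources --verbs=list --namespaced -o name); do
    kubectl get "$kind" -n "$ns" --ignore-not-found -o name 2>/dev/null
  done
}
```

Each line of output is a `kind/name` pair. Inspecting its `metadata.finalizers` usually points at the controller that never finished its cleanup.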

The Fix:

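Find the resource that holds the finalizer, then clear it. This skips the controller's cleanup, so treat it as a last resort:

kubectl patch <resource> <name> -p '{"metadata":{"finalizers":null}}' --type=merge

76. Version Skew: The Generational Gap 📉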

Components stop communicating.

The SRE Reality:

Versions are too far apart.

The Fix:

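Keep every component within the supported version skew: kubelets may be older than kube-apiserver, but never newer. Upgrade the control plane first, then the nodes.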
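The skew rule is simple enough to check mechanically. The sketch below compares minor versions only. `minor_of` and `kubelet_skew_ok` are hypothetical helpers, and the default allowance of three minor versions is an assumption to confirm against the version skew policy for your release.

```shell
# Extract the minor number from a version such as v1.31.2 or 1.29.
minor_of() {
  v="${1#v}"        # drop a leading "v"
  rest="${v#*.}"    # drop the major component
  echo "${rest%%.*}"
}

# Succeeds when the kubelet ($2) is not newer than the API server ($1) and is
# at most $3 minor versions behind it. The default of 3 is an assumption.
kubelet_skew_ok() {
  api=$(minor_of "$1")
  kubelet=$(minor_of "$2")
  max="${3:-3}"
  diff=$((api - kubelet))
  [ "$diff" -ge 0 ] && [ "$diff" -le "$max" ]
}
```

The inputs come from `kubectl version` for the server and the VERSION column of `kubectl get nodes` for each kubelet. For example, `kubelet_skew_ok v1.31.0 v1.29.4 || echo "skew too large"`.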

77. CRD Missing: The Ghost Resource 👁️

Kubernetes rejects your resource.

The SRE Reality:

CRD is missing.

The Fix:

Reinstall the required CRD before applying resources.
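Ordering is the part that tends to go wrong in pipelines, so a small wrapper can enforce it. This sketch assumes the first file contains only CustomResourceDefinition objects. `apply_with_crd` and both file paths are hypothetical.

```shell
# Apply a CRD manifest, wait until the API server serves it, then apply the
# resources that depend on it. Both paths are supplied by the caller.
apply_with_crd() {
  crd_file="$1"
  resources_file="$2"
  kubectl apply -f "$crd_file" &&
    kubectl wait --for=condition=Established --timeout=60s -f "$crd_file" &&
    kubectl apply -f "$resources_file"
}
```

The wait step matters because a CRD that was just created is not served immediately. Applying custom resources in the same breath can fail with the very "no matches for kind" error you were trying to fix.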

78. Proxy ARP Issues: The Network Loop 🔄

Traffic disappears inside the node.

The SRE Reality:

CNI misconfiguration causes routing loops.

The Fix:

Audit your CNI bridge and network settings.
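One concrete starting point for that audit is to see which interfaces on the node have proxy ARP switched on. The sketch below reads the kernel's per-interface settings. `proxy_arp_ifaces` is a hypothetical helper, and the optional directory argument exists only to make it testable.

```shell
# Print the interfaces that have proxy ARP enabled.
# $1 overrides the sysctl directory (defaults to the live kernel settings).
proxy_arp_ifaces() {
  root="${1:-/proc/sys/net/ipv4/conf}"
  for f in "$root"/*/proxy_arp; do
    [ -r "$f" ] || continue
    if [ "$(cat "$f")" = "1" ]; then
      basename "$(dirname "$f")"
    fi
  done
}
```

Some CNI plugins enable proxy ARP on their own interfaces deliberately, so a hit is a lead to compare against your CNI's documentation, not a verdict.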

79. Too Many Open Files: The OS Choke 📂

The API server starts failing under load.

The SRE Reality:

You hit file descriptor limits.

The Fix:

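Increase the file descriptor limit. Note that ulimit -n only changes the current shell and its children; for a long-running service, the limit usually comes from its systemd unit (LimitNOFILE) or from the container runtime.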
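Before raising anything, it is worth measuring how close the process actually is to its limit. The sketch below reads the Linux `/proc` filesystem and typically needs root to inspect another user's process. `fd_usage` is a hypothetical helper, and the optional second argument exists only to make it testable.

```shell
# Print "open/soft-limit" file descriptor counts for a process.
# $2 overrides the proc root (defaults to /proc).
fd_usage() {
  pid="$1"
  proc="${2:-/proc}"
  # Arithmetic expansion strips any padding that wc adds on some platforms.
  open=$(($(ls "$proc/$pid/fd" | wc -l)))
  limit=$(awk '/^Max open files/ {print $4}' "$proc/$pid/limits")
  echo "$open/$limit"
}
```

On a control plane node, `fd_usage "$(pgrep -o kube-apiserver)"` gives a quick reading. A count that sits near the limit under normal load is the signal to raise it.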

80. OIDC Provider Out of Sync: The Clock Conflict ⏱️

Users cannot authenticate.

The SRE Reality:

Clock drift between your nodes and the identity provider makes valid tokens look expired or not yet valid.

The Fix:

Ensure NTP or chrony is synced across nodes.
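On hosts that run chrony, the current offset can be checked with a small filter over `chronyc tracking`. This is a sketch: `clock_offset_ok` is a hypothetical helper, and the one-second default threshold is arbitrary.

```shell
# Read `chronyc tracking` output on stdin, print the clock offset in seconds,
# and fail when it exceeds $1 seconds (default 1, an arbitrary threshold).
clock_offset_ok() {
  awk -v max="${1:-1}" '
    /^System time/ { offset = $4; found = 1 }
    END {
      if (!found) exit 2          # no offset line: treat as unknown
      print offset
      exit ((offset + 0 > max + 0) ? 1 : 0)
    }'
}
```

Typical use is `chronyc tracking | clock_offset_ok 0.5` on each control plane node. Exit status 1 means the offset is too large, and 2 means the input had no offset line at all.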

🤖 The AI Edge: Autonomous Control Plane Auditing

In 2026, control plane failures no longer have to be surprises.

AI agents can now:

- monitor API request patterns

- detect anomalies early

- predict certificate expiry

- isolate misbehaving components

Instead of reacting, systems are now self-defending.

⚡ Interactive SRE Challenge

Run:

kubectl get --raw='/readyz'

If you don’t get "ok", your API server is already struggling.
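When the answer is not "ok", the verbose form of the endpoint (`/readyz?verbose`) lists each check with a `[+]` or `[-]` prefix. The sketch below filters that listing down to the failures. `failed_checks` is a hypothetical helper name.

```shell
# Read `/readyz?verbose` output on stdin and print only the failing checks.
# Returns 1 when at least one check failed, 0 otherwise.
failed_checks() {
  if out=$(grep '^\[-\]'); then
    echo "$out"
    return 1
  fi
}
```

A direct HTTP client such as `curl` returns the response body as-is even on a failing status, so it makes a convenient feed: `curl -ks "https://<api-server>:6443/readyz?verbose" | failed_checks`, where the address is yours to fill in.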

🧠 The Verdict

The control plane is not just infrastructure.

It is the soul of your cluster.

If etcd fails, nothing persists.

If the API server fails, nothing responds.

If webhooks fail, requests stall or get rejected.

Understanding this layer is what separates operators from real SREs.

🔜 What’s Next?

Stay tuned for Part 9, where we move from the Brain to the Body, debugging the "Pressure Cooker" of resource limits and node evictions.

💬 Quick Question: What’s the most "impossible" control plane bug you’ve ever fixed? Did an expired certificate ever take down your entire system?

Let’s swap "Deep State" stories in the comments.

"When the control plane breaks, Kubernetes doesn’t degrade. It disappears."