100 Reasons Your Kubernetes Cluster is Crying: Part 7 - The Scaling Seesaw ⚖️

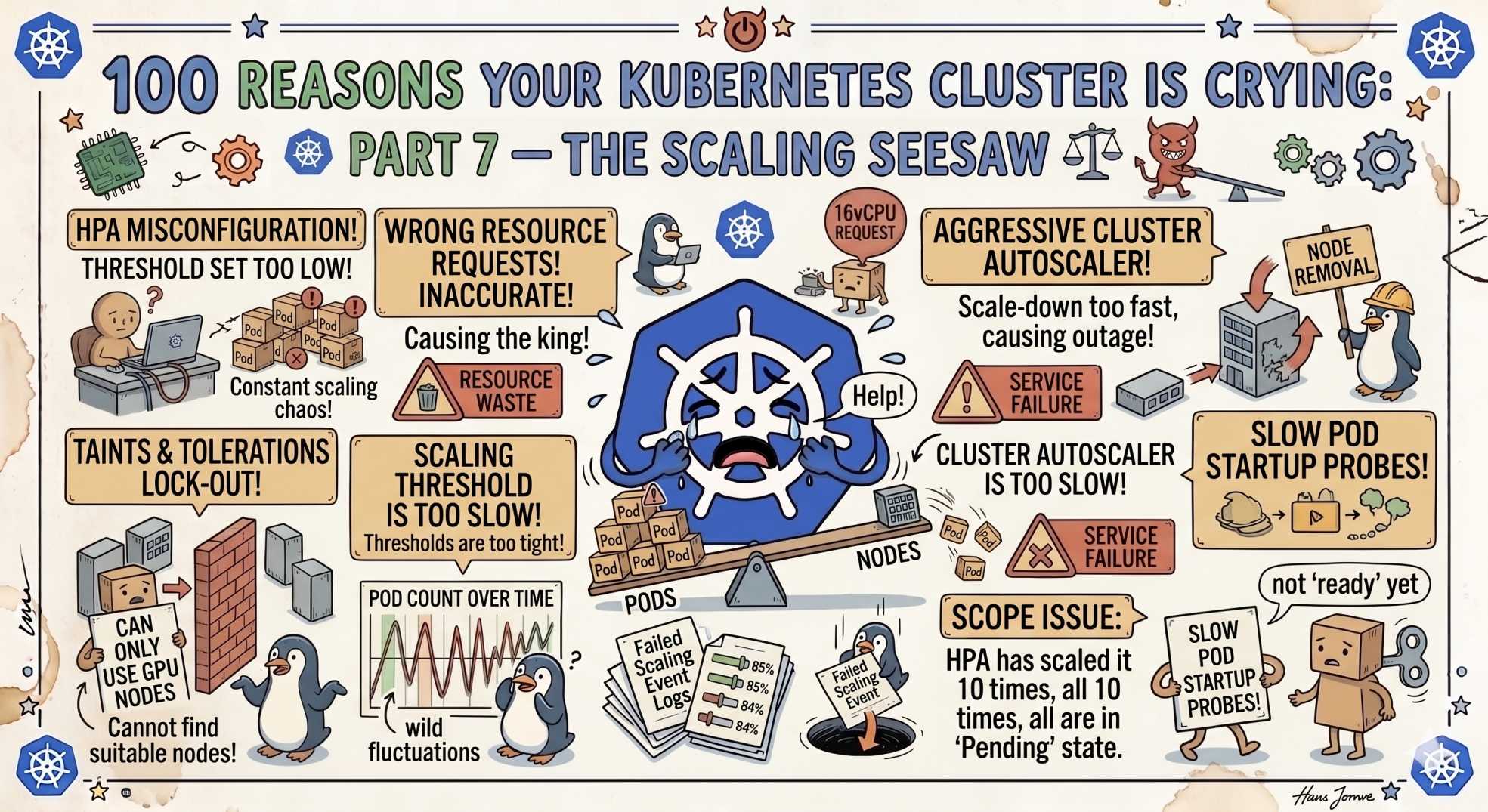

Rollouts stuck or autoscaling going wild? Part 7 reveals 10 Kubernetes scaling and deployment issues, from HPA failures and rollout stalls to probe misconfigurations and update strategy mistakes.

The team at DevOps Inside knows that once you’ve cleared the bouncers at the door (RBAC), your next challenge is making sure your app doesn't fall off the "Seesaw."

In Part 6, we escaped the "Permission Slip from Hell", those tricky security locks that keep your containers in solitary confinement.

But now that you have access, can you actually scale without breaking things?

Following our "From Pipelines to Prompts" series, we’re moving from the vault to the balancing act of deployments. If your rollout is stuck or your autoscaler is hyperventilating, your uptime is an illusion.

Here is Part 7: 10 reasons your cluster is sobbing over its scaling and rollouts.

61. Rollout Stuck: The Stalled Parade 🚧

You triggered a deployment. One pod updated. Then nothing.

The SRE Reality:

A new pod is failing its readiness checks or is stuck in ImagePullBackOff. Kubernetes will not retire more old pods until the new ones become Ready, so the rollout stops progressing.

The Fix:

Run:

kubectl rollout status deployment/<name>

Check the latest ReplicaSet events.
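If you would rather have a stalled rollout announce itself sooner, the Deployment's progress deadline is the relevant knob. Here is a minimal sketch of a Deployment spec fragment. The 120-second and 10-second values are assumptions for illustration, not recommendations:

```yaml
# Fragment of a Deployment spec; values are illustrative.
spec:
  progressDeadlineSeconds: 120   # default is 600; after this the Deployment reports ProgressDeadlineExceeded
  minReadySeconds: 10            # a new pod must stay Ready this long before it counts as available
```

Once the deadline passes, kubectl rollout status exits with an error instead of waiting, which makes the stall visible to a CI job.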

62. HPA Not Scaling: The Broken Thermostat 🌡️

Traffic is rising, but pod count stays flat.

The SRE Reality:

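The HPA has no metrics to work with. Either the metrics pipeline is down, or the pods have no resource requests for a utilization target to be measured against.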

The Fix:

Run:

kubectl top pods

If this fails, your metrics server is missing or broken.
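For reference, here is a sketch of an HPA that scales on CPU utilization. The Deployment name web, the replica bounds, and the 70% target are all assumptions made up for this example:

```yaml
# Hypothetical HPA for a Deployment named "web"; names and numbers are illustrative.
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: web
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: web
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          # Percentage of the pods' CPU *requests*, so every container
          # in the target pods needs resources.requests.cpu set.
          averageUtilization: 70
```

A utilization target is measured against requests, so a working metrics server alone is not enough if the pod template omits them.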

63. Deployment Loops: The Infinite Do-Over 🔁

Pods keep restarting in cycles.

The SRE Reality:

Liveness probes are too aggressive and kill the container before it finishes starting, or a dependency it needs is not ready yet.

The Fix:

Adjust probe timing: raise initialDelaySeconds or add a startupProbe. Let your app warm up before judging it.
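One way to give a slow starter room is a startup probe, which holds off the liveness and readiness probes until it succeeds. This is a sketch only: the image, the /healthz and /ready paths, port 8080, and every timing value are assumptions:

```yaml
# Fragment of a pod template (spec.template.spec); image, paths, and port are assumed.
containers:
  - name: app
    image: registry.example.com/app:1.2.3
    startupProbe:
      httpGet:
        path: /healthz
        port: 8080
      periodSeconds: 5
      failureThreshold: 30   # up to 5s * 30 = 150s to finish starting
    livenessProbe:
      httpGet:
        path: /healthz
        port: 8080
      periodSeconds: 10
      timeoutSeconds: 2
      failureThreshold: 3    # restart after about 30s of consecutive failures
    readinessProbe:
      httpGet:
        path: /ready
        port: 8080
      periodSeconds: 5       # failing readiness removes the pod from Service endpoints, no restart
```

Keeping liveness and readiness on separate endpoints lets a pod step out of rotation while a dependency is down without being restarted for it.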

64. MaxUnavailable Reached: The Empty Bridge 🌉

Your rollout caused downtime instead of smooth updates.

The SRE Reality:

Too many old pods were taken down before their replacements were Ready.
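How many pods may be missing mid-rollout comes from two fields in the Deployment's rolling update settings, both of which default to 25%. Here is a minimal sketch of where they live, with an illustrative replica count:

```yaml
# Fragment of a Deployment spec; the replica count is illustrative.
spec:
  replicas: 4
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 0   # never drop below the desired replica count
      maxSurge: 1         # add one extra pod at a time; must be above 0 when maxUnavailable is 0
```

With these values Kubernetes starts one new pod, waits for it to become Ready, and only then removes an old one. That assumes the pods have a readiness probe that reflects real readiness.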

The Fix:

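Set maxUnavailable to 0 and keep maxSurge above 0 (both cannot be zero), so a new pod must be Ready before an old one is removed:

maxUnavailable: 0

65. Deployment Drift: The GitOps Gap 📉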

Git says one thing. Cluster runs another.

The SRE Reality:

Your GitOps controller is out of sync, or manual edits broke alignment.

The Fix:

Re-sync your controller. Stop manual changes in production.

66. VPA Over-scaling: The Hungry Giant 🍽️

Your pod suddenly requests massive resources.

The SRE Reality:

VPA reacted to a spike and overcompensated.
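VPA recommendations can be fenced in per container through the object's resource policy. This sketch assumes a Deployment named web and adds memory bounds that the CPU-only example in this post does not mention:

```yaml
# Hypothetical VPA for a Deployment named "web". Requires the VPA components
# (an add-on, not part of core Kubernetes) to be installed in the cluster.
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: web
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: web
  updatePolicy:
    updateMode: "Auto"
  resourcePolicy:
    containerPolicies:
      - containerName: "*"     # apply to every container in the pod
        minAllowed:
          cpu: 100m
          memory: 128Mi        # memory bounds are an added assumption
        maxAllowed:
          cpu: "2"
          memory: 2Gi
```

The bounds cap what the recommender may request on the pod's behalf, so a single spike cannot push requests past maxAllowed.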

The Fix:

Set bounds in the VPA's resource policy (under resourcePolicy.containerPolicies):

minAllowed:
  cpu: 100m
maxAllowed:
  cpu: 2

67. HPA Flapping: The Nervous Yo-Yo 🎯

Pods scale up and down constantly.

The SRE Reality:

Thresholds are too tight.
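In an autoscaling/v2 HPA, damping for this kind of oscillation is configured under behavior. Treat this as a sketch with illustrative values, chosen only to show the shape of the block:

```yaml
# Fragment of an autoscaling/v2 HorizontalPodAutoscaler spec; values are illustrative.
spec:
  behavior:
    scaleDown:
      stabilizationWindowSeconds: 600   # act on the highest recommendation from the last 10 minutes
      policies:
        - type: Pods
          value: 1
          periodSeconds: 60             # remove at most one pod per minute
    scaleUp:
      stabilizationWindowSeconds: 60    # default is 0; a short window damps brief spikes
```

The scale-down policy limits how fast pods are removed, which helps even when the window alone does not stop the yo-yo.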

The Fix:

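Make sure the scale-down stabilization window (behavior.scaleDown in autoscaling/v2) has not been shortened. 300 seconds is the default, so raise it further if flapping persists:

stabilizationWindowSeconds: 300

68. Orphaned ReplicaSets: The Ghost Versions 👻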

Too many old versions are piling up.

The SRE Reality:

The revision history limit is too generous. By default a Deployment keeps 10 old ReplicaSets.

The Fix:

Set:

revisionHistoryLimit: 3

69. Immutable Field Error: The Concrete Wall 🧱

Your update fails instantly.

The SRE Reality:

You changed an immutable field, such as a Deployment's spec.selector.

The Fix:

Delete and recreate the deployment carefully.
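The field that most often triggers this on a Deployment is the label selector. Here is a sketch of a layout that keeps the selector stable across releases; the name, labels, and image are hypothetical:

```yaml
# Hypothetical Deployment; names, labels, and image are illustrative.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web
spec:
  replicas: 3
  selector:
    matchLabels:
      app: web          # immutable after creation in apps/v1
  template:
    metadata:
      labels:
        app: web        # must match the selector
        version: "2"    # labels outside the selector can change between releases
    spec:
      containers:
        - name: web
          image: registry.example.com/web:2.0.0
```

Putting anything that changes per release in labels outside the selector means you should rarely need the delete-and-recreate path.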

70. Update Strategy Mismatch: The Total Wipeout 💥

Your service drops completely during deployment.

The SRE Reality:

You used Recreate instead of rolling updates. Recreate terminates every old pod before starting any new ones.

The Fix:

Use:

strategy:
  type: RollingUpdate

🤖 The AI Edge: Predictive Scaling vs Reactive Fear

In 2026, scaling no longer has to be reactive.

AI agents analyze patterns like:

- traffic spikes

- marketing events

- historical usage

They scale your cluster before demand hits.

Your system is no longer chasing load.

It is preparing for it.

⚡ Interactive SRE Challenge

Run:

kubectl get hpa

If any target shows <unknown>, you’ve hit issue #62.

🧠 The Verdict

Scaling only works if your inputs are correct.

Bad metrics, bad probes, or bad configs will break the Seesaw.

Kubernetes keeps its promise.

But only if you hold up your side.

🔜 What’s Next?

Stay tuned for Part 8, where we move from the Seesaw to the "Deep State", debugging control plane and API server issues.

💬 Quick question: What’s the most expensive scaling mistake you’ve ever seen? Did a VPA ever try to eat your entire cloud budget?

Let’s swap stories in the comments.

"Scaling is not about reacting faster. It is about understanding sooner. If your system only responds after the fire starts, you are already too late."