100 Reasons Your Kubernetes Cluster is Crying: Part 10 - The Future-Proofing Final 🚀

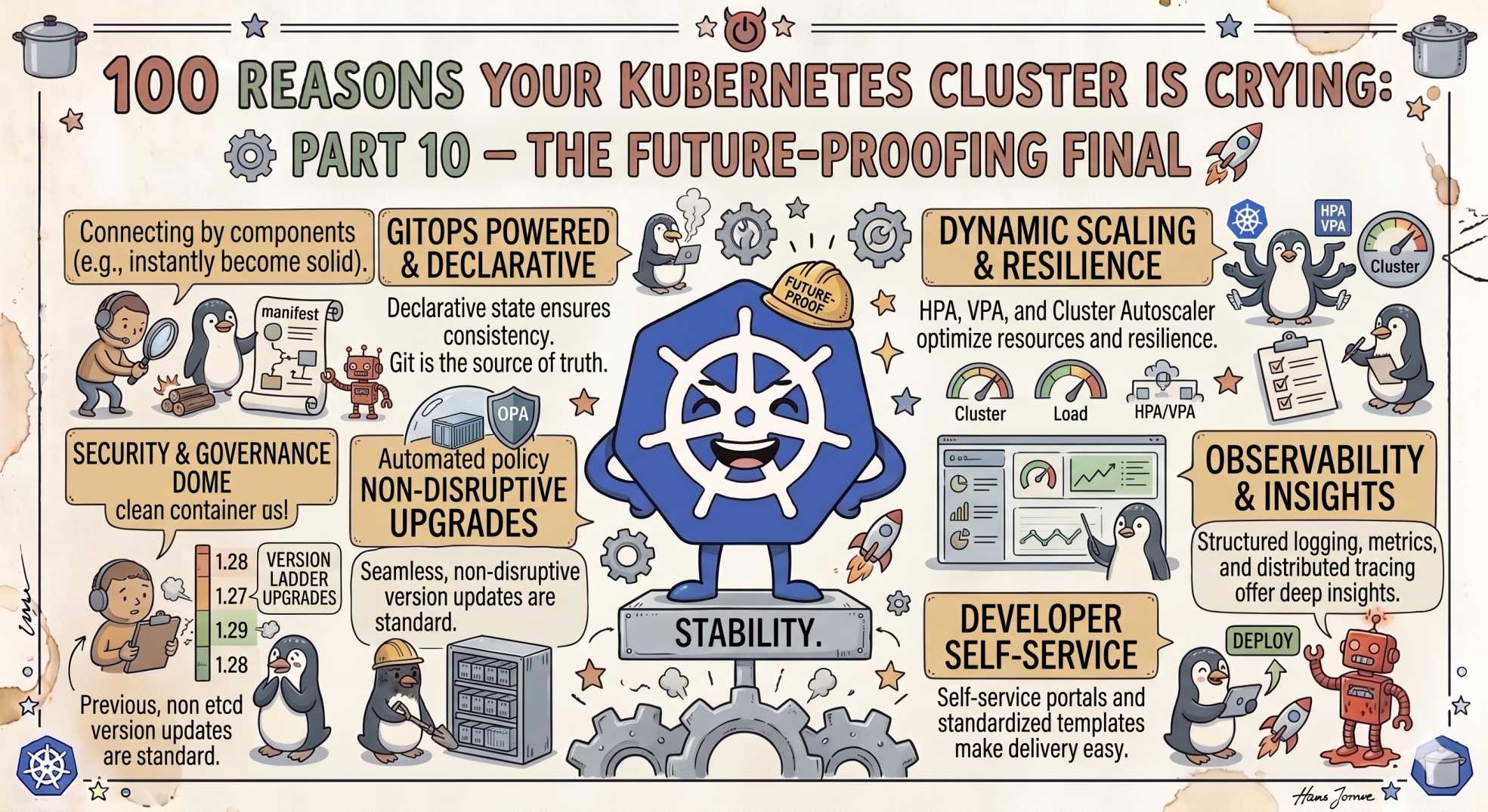

Are manual fixes and constant firefighting slowing your team down? Part 10 reveals 10 Kubernetes future-proofing challenges, from observability gaps and security drift to AI-driven operations and human error prevention.

The team at DevOps Inside knows that even if you’ve mastered the "Pressure Cooker" of node evictions we explored in Part 9, you’re still not playing the "End Game." A cluster that survives today might still fail tomorrow because it’s built on yesterday’s manual habits.

Following our "From Pipelines to Prompts" series, we’ve reached the final frontier. We’re moving from the physical stress of the node to the long-term health of your career and your infrastructure. If you are not building for a future of automated, AI-augmented operations, you are simply waiting for the next P0.

Here is the grand finale: Part 10, the final 10 reasons your cluster is crying for a smarter tomorrow.

🌌 In the SRE world, the goal is not just to "fix" the cluster

It’s to make sure the cluster does not need fixing in the first place.

Let’s finish this 100-point masterclass with the high-level strategies that separate the Firefighters from the Engineers.

91. Manual RCA Fatigue: The Detective's Burnout 🔍

You spend four hours grepping through logs to find a root cause that happened in three seconds.

The SRE Reality

Humans are not built to read millions of log lines. If your Root Cause Analysis (RCA) is always manual, you will never scale effectively.

The Fix

Move to Causal Observability. Use systems that automatically connect logs, metrics, traces, and events so the Why appears beside the What.
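To make "the Why beside the What" concrete, here is a minimal sketch of the correlation step. It assumes every signal has already been normalized into one shape. The `Signal` type, the `correlate` helper, the 30-second window, and the sample data are all hypothetical, and a real platform does far more than match on source and time.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Signal:
    kind: str            # "log", "metric", "trace", or "event"
    source: str          # hypothetical workload name
    timestamp: datetime
    message: str


def correlate(alert: Signal, signals: list[Signal], window_s: int = 30) -> list[Signal]:
    """Return same-source signals seen within window_s seconds before the alert, oldest first."""
    start = alert.timestamp - timedelta(seconds=window_s)
    related = [
        s for s in signals
        if s.source == alert.source and start <= s.timestamp <= alert.timestamp
    ]
    return sorted(related, key=lambda s: s.timestamp)


# Hypothetical data: three signals near the alert, one far too old to matter.
t0 = datetime(2026, 1, 1, 12, 0, 0)
signals = [
    Signal("metric", "checkout", t0 - timedelta(seconds=5), "p99 latency above threshold"),
    Signal("event", "checkout", t0 - timedelta(seconds=20), "ConfigMap updated"),
    Signal("log", "search", t0 - timedelta(seconds=3), "cache miss"),
    Signal("log", "checkout", t0 - timedelta(minutes=10), "startup complete"),
]
alert = Signal("log", "checkout", t0, "Database Timeout")

timeline = correlate(alert, signals)
for s in timeline:
    print(s.timestamp.time(), s.kind, s.message)
```

The output is a short timeline leading up to the alert: a config change, then a latency breach. That is the kind of context you would otherwise spend hours grepping for.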

92. Cardinality Explosion: The Metric Monster 📈

Your Prometheus bill is now higher than your compute bill because someone added user_id as a metric label.

The SRE Reality

High-cardinality labels create a massive explosion of time-series data. Queries slow down, storage costs explode, and dashboards become unusable.

The Fix

Drop unnecessary labels at ingestion. Track only what is useful for alerting or debugging.
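To see why a single label hurts so much, the sketch below counts distinct label sets before and after dropping `user_id`. Each distinct set is one time series. The `series_count` helper and the sample data are hypothetical. In Prometheus itself, dropping a label at ingestion is typically done with a `labeldrop` rule under `metric_relabel_configs`.

```python
def series_count(samples: list[dict[str, str]], drop: frozenset[str] = frozenset()) -> int:
    """Count distinct label sets (time series) after removing the labels in `drop`."""
    unique = {
        tuple(sorted((k, v) for k, v in labels.items() if k not in drop))
        for labels in samples
    }
    return len(unique)


# Hypothetical traffic: 1,000 users hitting 2 endpoints.
samples = [
    {"endpoint": endpoint, "user_id": str(uid)}
    for uid in range(1000)
    for endpoint in ("/cart", "/checkout")
]

before = series_count(samples)
after = series_count(samples, drop=frozenset({"user_id"}))
print(before, after)  # 2000 2
```

Series count is roughly the product of each label's distinct values, so one unbounded label multiplies everything else.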

93. Silent Latency Spikes: The "Slow" Failure 🐢

The app is technically "up," but the latency is so high that users have already given up and closed the tab.

The SRE Reality

Traditional uptime checks miss the real pain. Tail latency destroys user experience long before complete outages happen.

The Fix

Define SLIs and SLOs around latency, especially P95 and P99 response times.
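Here is a small sketch of why tail percentiles matter more than averages. It uses the nearest-rank percentile definition. Production systems usually estimate percentiles from histograms instead, so treat the `percentile` and `meets_slo` helpers, the 300 ms threshold, and the sample latencies as illustrative assumptions.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def meets_slo(samples: list[float], pct: float, threshold_ms: float) -> bool:
    """True if the pct-th percentile latency is within the threshold."""
    return percentile(samples, pct) <= threshold_ms


# Hypothetical window: 97 fast requests and 3 painfully slow ones.
latencies_ms = [50.0] * 97 + [4000.0] * 3

print(sum(latencies_ms) / len(latencies_ms))  # 168.5
print(percentile(latencies_ms, 95))           # 50.0
print(percentile(latencies_ms, 99))           # 4000.0
print(meets_slo(latencies_ms, 99, 300.0))     # False
```

The mean and even P95 look healthy here, while P99 shows that some users waited four seconds. That is the failure an uptime check never sees.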

94. Over-Provisioned Ghost Towns: The Idle Tax 🏚️

You have namespaces consuming guaranteed resources even though nobody has touched them in months.

The SRE Reality

"Just in case" capacity quietly burns your cloud budget.

The Fix

Use usage metrics and cleanup automation to identify idle workloads and reclaim unused resources. Descheduler policies can then evict and repack the remaining pods so underused nodes can be scaled down.
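The "identify idle workloads" step can be sketched as a simple filter over per-namespace usage data. Everything here is an assumption for illustration: the `NamespaceUsage` fields, the 5% utilization and 90-day thresholds, and the namespace names. In practice the numbers would come from your metrics and deployment history.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NamespaceUsage:
    name: str
    cpu_requested: float     # cores reserved through resource requests
    cpu_used_avg: float      # average cores actually used over the review window
    days_since_deploy: int


def find_idle(usages, max_utilization=0.05, min_idle_days=90):
    """Return namespaces that reserve CPU, barely use it, and have not been deployed to recently."""
    idle = []
    for u in usages:
        if u.cpu_requested <= 0:
            continue  # nothing reserved, nothing to reclaim
        utilization = u.cpu_used_avg / u.cpu_requested
        if utilization <= max_utilization and u.days_since_deploy >= min_idle_days:
            idle.append(u.name)
    return idle


# Hypothetical inventory.
usages = [
    NamespaceUsage("demo-2023", cpu_requested=8.0, cpu_used_avg=0.1, days_since_deploy=200),
    NamespaceUsage("payments", cpu_requested=16.0, cpu_used_avg=9.5, days_since_deploy=2),
    NamespaceUsage("load-test", cpu_requested=4.0, cpu_used_avg=0.0, days_since_deploy=120),
]

print(find_idle(usages))  # ['demo-2023', 'load-test']
```

A report like this is a safer first step than automatic deletion. Let owners confirm before anything is reclaimed.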

95. Security Drift: The Outlaw Change 🔓

Someone hot-fixed production using kubectl edit, and now Git no longer reflects reality.

The SRE Reality

If Git and the cluster disagree, your Infrastructure as Code has failed.

The Fix

Enforce GitOps with self-healing enabled using tools like Argo CD or Flux.
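Conceptually, a GitOps controller keeps comparing what Git declares with what the cluster is running. The sketch below shows only that comparison on plain dictionaries. `find_drift` is a hypothetical helper, not how any particular tool implements it, and real tools also have to ignore server-defaulted fields and status.

```python
def find_drift(desired: dict, live: dict, path: str = "") -> list[str]:
    """Return dotted paths where the live object differs from the desired (Git) object."""
    drift = []
    for key in sorted(set(desired) | set(live)):
        here = f"{path}.{key}" if path else str(key)
        if key not in live:
            drift.append(f"{here}: missing in cluster")
        elif key not in desired:
            drift.append(f"{here}: not in Git")
        elif isinstance(desired[key], dict) and isinstance(live[key], dict):
            drift.extend(find_drift(desired[key], live[key], here))
        elif desired[key] != live[key]:
            drift.append(f"{here}: Git={desired[key]!r} cluster={live[key]!r}")
    return drift


# Hypothetical manifests: someone scaled production by hand.
desired = {"spec": {"replicas": 3, "image": "shop:1.4.2"}}
live = {"spec": {"replicas": 5, "image": "shop:1.4.2"}}

print(find_drift(desired, live))  # ['spec.replicas: Git=3 cluster=5']
```

With self-healing enabled, the controller reverts that difference instead of just reporting it, so the hot-fix has to go through Git to stick.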

96. Log Noise: The Static Void 📢

You are storing millions of "Heartbeat received" logs every single day.

The SRE Reality

Most logs are useless until an incident occurs, yet teams pay to ingest and retain all of them.

The Fix

Adopt dynamic logging levels. Keep systems quiet until anomalies appear.
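One way to picture dynamic logging levels is with Python's standard `logging` module. This is a sketch, not a recommendation of a specific mechanism. The logger name, the `adjust_verbosity` helper, and the 5% error-rate threshold are assumptions, and in a real service the trigger would come from your alerting or a config reload.

```python
import logging

logging.basicConfig(level=logging.WARNING, format="%(levelname)s %(message)s")
log = logging.getLogger("heartbeat-demo")  # hypothetical logger name


def adjust_verbosity(logger: logging.Logger, error_rate: float, threshold: float = 0.05) -> int:
    """Switch to DEBUG while the error rate is above the threshold, WARNING otherwise."""
    level = logging.DEBUG if error_rate > threshold else logging.WARNING
    logger.setLevel(level)
    return level


adjust_verbosity(log, error_rate=0.01)
log.debug("Heartbeat received")  # suppressed: the system is healthy

adjust_verbosity(log, error_rate=0.20)
log.debug("Heartbeat received")  # emitted: an anomaly is in progress
```

The heartbeat line costs nothing while things are calm and becomes available the moment it might help.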

97. Poor Trace Correlation: The Disconnected Map 🗺️

Your logs show "Database Timeout," but nobody knows which request caused it.

The SRE Reality

Logs without TraceIDs are disconnected fragments with no story.

The Fix

Adopt OpenTelemetry so logs, metrics, and traces share a common TraceID.
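The core idea is that every log line carries the ID of the request that produced it. The sketch below illustrates it with only the Python standard library. It is a stand-in for what an OpenTelemetry SDK and its logging integration would manage for you, not the OpenTelemetry API itself. The `current_trace_id` variable, the filter, and the logger name are hypothetical.

```python
import contextvars
import io
import logging
import uuid

# Stand-in for the trace context an OpenTelemetry SDK would manage.
current_trace_id = contextvars.ContextVar("current_trace_id", default="-")


class TraceIdFilter(logging.Filter):
    """Attach the active trace ID to every log record."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.trace_id = current_trace_id.get()
        return True


stream = io.StringIO()  # captured in memory so the output is easy to inspect
handler = logging.StreamHandler(stream)
handler.setFormatter(logging.Formatter("trace_id=%(trace_id)s %(message)s"))
handler.addFilter(TraceIdFilter())

log = logging.getLogger("orders-demo")  # hypothetical service logger
log.addHandler(handler)
log.setLevel(logging.INFO)
log.propagate = False


def handle_request(trace_id: str) -> None:
    """Set the trace context for the duration of one request."""
    token = current_trace_id.set(trace_id)
    try:
        log.error("Database Timeout")
    finally:
        current_trace_id.reset(token)


request_trace_id = uuid.uuid4().hex
handle_request(request_trace_id)
print(stream.getvalue(), end="")
```

Now "Database Timeout" is no longer an orphan. Searching for that trace ID pulls up the spans and metrics for the exact request that failed.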

98. Documentation Rot: The Ancient Scrolls 📜

Your operational runbook was last updated in 2022.

The SRE Reality

Outdated documentation is often worse than having no documentation at all.

The Fix

Treat documentation like code. Store runbooks in the same repositories as deployments and update them through pull requests.
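Once runbooks live in the repository, staleness becomes something a CI job can check. Here is a minimal sketch, assuming you can get a last-updated date per file (for instance from `git log`). The `stale_runbooks` helper, the 180-day limit, and the file paths are hypothetical.

```python
from datetime import date


def stale_runbooks(last_updated: dict[str, date], today: date, max_age_days: int = 180) -> list[str]:
    """Return runbook paths whose last update is older than max_age_days, oldest first."""
    stale = [
        (updated, path)
        for path, updated in last_updated.items()
        if (today - updated).days > max_age_days
    ]
    return [path for _, path in sorted(stale)]


# Hypothetical repository state.
last_updated = {
    "runbooks/node-drain.md": date(2023, 8, 1),
    "runbooks/etcd-restore.md": date(2022, 3, 14),
    "runbooks/ingress-failover.md": date(2025, 11, 2),
}

print(stale_runbooks(last_updated, today=date(2026, 1, 15)))
# ['runbooks/etcd-restore.md', 'runbooks/node-drain.md']
```

Failing the build is probably too harsh. Opening a reminder issue for the owning team is a gentler way to use the same list.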

99. The 'Bus Factor': The Hero Bottleneck 🧠

Only one person truly understands how your kube-apiserver was configured.

The SRE Reality

If critical knowledge exists only inside someone’s head, the system is fragile.

The Fix

Automate everything. Build self-service platforms where operational knowledge is encoded into workflows and tooling.

100. The Final Boss: Human Error 💥

One of the most common causes of downtime is still a human typing the wrong command into the wrong terminal.

The SRE Reality

Every SRE has experienced the moment of realizing they were operating in production instead of staging.

The Fix

Add safety guardrails:

- kube-ps1

- kubectl-safe

- Protected production contexts

- CI/CD-only deployments

- Mandatory approval workflows

The safest production cluster is the one humans cannot accidentally destroy.
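The "protected production contexts" idea can be sketched as a small pre-flight check that a kubectl wrapper might run. It does not reproduce how any of the tools above work. The context names, the list of destructive verbs, and the `check_command` helper are assumptions for illustration.

```python
PROTECTED_CONTEXTS = {"prod", "production"}                  # hypothetical context names
DESTRUCTIVE_VERBS = {"delete", "drain", "replace", "scale"}  # illustrative, not exhaustive


def check_command(context: str, args: list[str], confirmation: str = "") -> tuple[bool, str]:
    """Decide whether a kubectl-style command may run in the given context."""
    verb = args[0] if args else ""
    if context not in PROTECTED_CONTEXTS or verb not in DESTRUCTIVE_VERBS:
        return True, "allowed"
    if confirmation == context:
        return True, "allowed after typed confirmation"
    return False, f"blocked: type the context name '{context}' to run '{verb}' here"


print(check_command("staging", ["delete", "pod", "web-0"]))
print(check_command("prod", ["get", "pods"]))
print(check_command("prod", ["delete", "namespace", "shop"]))
print(check_command("prod", ["delete", "namespace", "shop"], confirmation="prod"))
```

Typing the context name forces a two-second pause, which is often enough to notice you are in the wrong terminal.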

🤖 The AI Edge: The Agentic SRE

As we close this series, the biggest transformation happening in 2026 is the shift from Observability to Actionability.

AI agents are now trained directly on your team's historical incidents and post-mortems.

When a new outage resembles an old one, the AI does not just create an alert. It drafts remediation steps automatically.

Imagine this:

“This error pattern matches an incident from October 2024.

Updating this ConfigMap resolved it previously.

Would you like me to open a remediation PR?”

This is not AI replacing SREs.

It is AI becoming the ultimate operational memory system, one that never forgets a lesson.
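The "operational memory" idea boils down to matching a new error against past incidents. The sketch below does this with plain word overlap (Jaccard similarity), which is a deliberately simple stand-in for whatever an agentic platform really uses. The incident IDs, summaries, and 0.5 cutoff are made up.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercased alphanumeric words in a message."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def similarity(a: str, b: str) -> float:
    """Jaccard similarity of the word sets of two messages (0.0 to 1.0)."""
    ta, tb = tokens(a), tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def best_match(error: str, incidents: dict[str, str], min_score: float = 0.5):
    """Return (incident_id, score) for the closest past incident, or None if nothing is close enough."""
    scored = [(similarity(error, summary), incident_id) for incident_id, summary in incidents.items()]
    score, incident_id = max(scored, default=(0.0, None))
    if incident_id is None or score < min_score:
        return None
    return incident_id, score


# Hypothetical post-mortem summaries.
incidents = {
    "INC-0001": "connection pool exhausted for orders database",
    "INC-0002": "certificate expired on ingress controller",
}

match = best_match("orders database connection pool exhausted", incidents)
print(match[0])  # INC-0001
```

The match is only a pointer to the old post-mortem. The remediation it suggests still needs a human to review and approve it.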

⚡ Interactive SRE Challenge

Look at your last three production incidents.

Could an AI system have identified the pattern faster than your team did?

If the answer is "yes," it may be time to explore Agentic Observability platforms.

🧠 The Verdict

We covered 100 reasons your Kubernetes cluster is crying, from tiny YAML mistakes in Part 1 to organizational and operational failures in Part 10.

Kubernetes is not difficult because the technology is broken.

It is difficult because the scale of complexity is enormous.

But as we always say at DevOps Inside:

Complexity is just an opportunity for better automation.

Every recurring outage is a lesson.

Every manual task is a bug.

And every painful incident is an opportunity to build systems that are smarter than yesterday’s.

That is how teams move from merely surviving Kubernetes to truly mastering it.

💬 Quick Question: Which of these 100 reasons gave you the biggest "Aha!" moment? Or did we miss a hidden Secret Boss issue that still haunts your cluster?

Let’s celebrate, argue, and swap war stories in the comments.

And finally…

What topic should DevOps Inside tackle next?

"The best Kubernetes clusters are not the ones that never fail. They are the ones that learn faster every time they do."