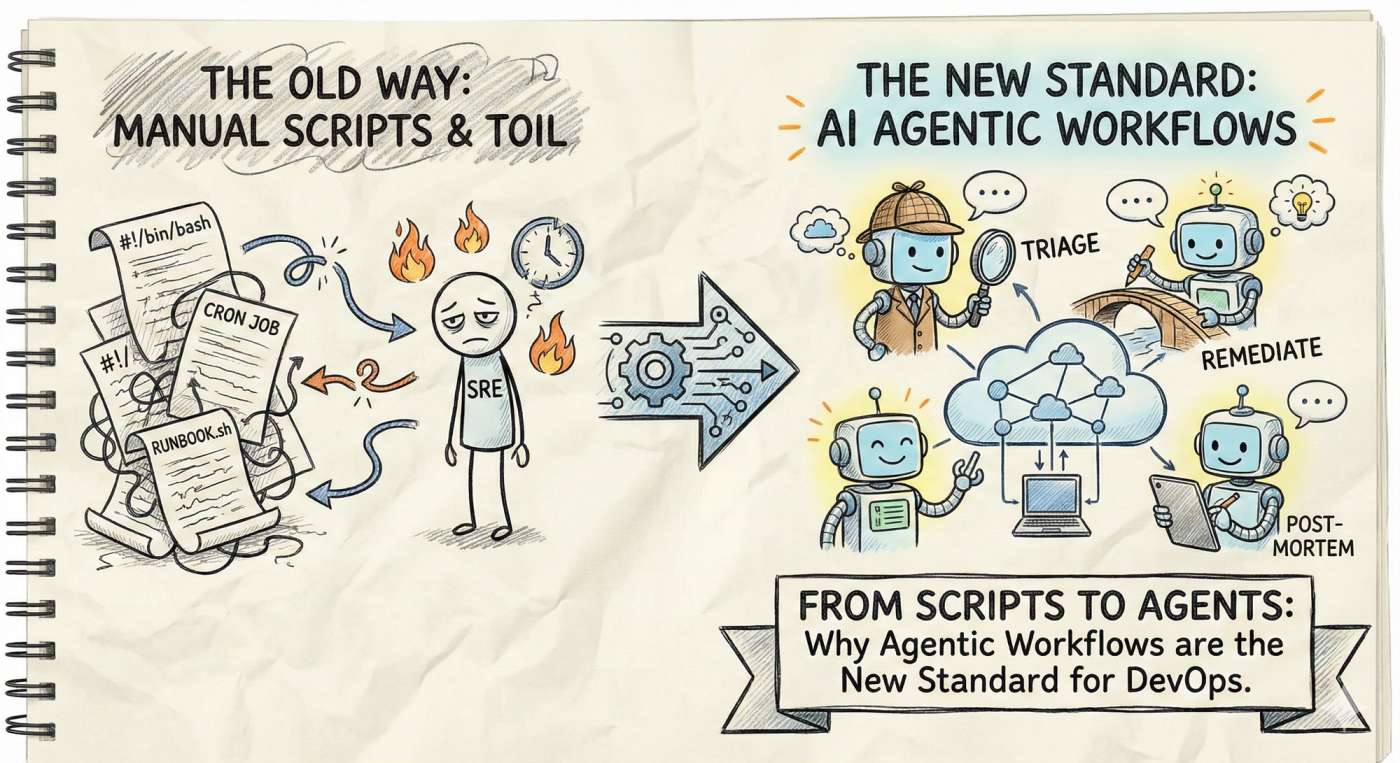

From Scripts to Agents: Why Agentic Workflows are the New Standard for DevOps in 2026.

DevOps used to run on scripts. Now AI agents can reason, troubleshoot, and act across your stack. Discover why agentic workflows are the new standard in 2026.

Picture this: It’s 02:13 AM on a Tuesday. Your pager is screaming, the dashboards are bleeding red, and the Slack "incidents" channel is exploding. You rub your eyes, open your laptop, and instead of fumbling through outdated runbooks, you simply ask your Platform Agent: "Triage the service-X outage and remediate if safe." While you sip your tea, the agent correlates logs, restarts a stuck container, verifies the fix, and drafts a post-mortem for you to review when you’re actually awake. ☕🤖

This isn’t sci-fi; it’s the reality of agentic workflows. In 2026, we’ve moved past simple scripts. We are now in the era of autonomous agents that amplify SRE teams and turn "firefighting" into "good engineering."

What Exactly Are AI DevOps Agents?

Think of a traditional script as a train: it follows a fixed track. If there’s a rock on the tracks (an unexpected error), it just crashes.

An AI DevOps Agent is like a self-driving car. It has a destination (your goal), but it perceives the environment and makes decisions to get there safely.

Unlike a cron job, these agents:

- Understand Intent: You give a goal ("Optimize our cloud spend"), not a command ("Delete idle EC2 instances").

- Act Autonomously: They plan multi-step workflows across Kubernetes, CI pipelines, and Cloud APIs.

- Adapt on the Fly: If a rollout fails, they don't just stop; they analyze why and decide whether to rollback or retry with a fix.

The Difference in a Nutshell:

- Traditional Automation: "If X happens, do Y." (Predictable, but brittle).

- Agentic Workflows: "Achieve Y by reasoning through X." (Dynamic and contextual).

Why You Can’t Ignore This in 2026

Cloud complexity has hit a breaking point. With microservices everywhere and ephemeral infrastructure moving faster than we can track, manual SRE work doesn't scale. Here is why agents are now a "must-have":

- Slash MTTR: Agents don't need 10 minutes to "wake up" and find the right dashboard. They triage in seconds.

- Scale Tribal Knowledge: You can "teach" an agent how your best senior engineer handles a database lock. Now, your junior engineers have that expertise available 24/7.

- Kill the Toil: Automation for restarts, rollbacks, and right-sizing becomes "set it and forget it," letting you focus on architectural design instead of clicking buttons. 🎯

- Continuous Governance: Instead of a weekly security scan, agents act as "digital police," instantly fixing security drift the moment it happens.

Real-World Use Cases (The "Day in the Life")

| Scenario | The Old Way (Manual / Scripted) | The Agentic Way |

|---|---|---|

| Incident Triage | You manually grep logs and check Grafana. | Agent correlates logs, identifies the “poison pill” PR, and suggests a rollback. |

| Cloud Costs | A surprise ₹5,000 cloud bill at the end of the month. | Agent finds a zombie dev cluster and asks permission to shut it down. |

| Deployment | You watch a CI/CD pipeline for 20 minutes. | Agent monitors canary traffic, detects latency spikes, and auto-diverts traffic. |

How It Works: The "Brain" Behind the Ops

You don't just give an AI a root password and pray. A safe agentic architecture looks like this:

- The Trigger: A human command or a Prometheus alert.

- Context Retrieval: The agent "reads" your runbooks and current infra state (this is why RAG—Retrieval-Augmented Generation—is vital).

- Reasoning: An LLM creates a step-by-step plan.

- Guardrails: A policy engine (like OPA) checks: "Is the agent allowed to delete this?"

- Execution: The agent calls the API.

- Feedback: It checks the metrics. Did the fix work? If yes, close the ticket. If no, escalate to a human.

The Golden Rules of Safe Autonomy

Autonomy without guardrails is a disaster waiting to happen. Follow these principles to sleep soundly:

- Least Privilege: Give your agent a "spoon," not a "chainsaw." Use scoped service accounts. 🍴

- Human-in-the-Loop: For high-risk actions (like deleting a production DB), always require a human "thumbs up."

- Explainability: The agent must log its "thought process." If it makes a change, it should tell you why.

- Idempotency: Every action should be safe to run twice.

Your 30/60/90 Day Agent Roadmap

Don't try to automate everything at once. Start small and build trust.

Day 0–30: The Prototype Phase

- Identify Toil: Find the top 3 annoying alerts that pop up every week.

- Build a "Read-Only" Agent: Build an agent that only triages. It gathers logs and suggests a fix, but doesn't touch anything.

- Measure: Track how much time you save just on investigation.

Day 31–60: The "Safe Action" Phase

- Automate Low-Risk Ops: Let the agent handle simple tasks like restarting unhealthy pods or cleaning up old snapshots.

- Add Guardrails: Implement RBAC and "Dry-Run" modes.

- Tabletop Drills: Try to "trick" your agent in a dev environment to see if your guardrails hold.

Day 61–90: Scaling Up

- End-to-End Remediation: Move to auto-rollback for failed deployments.

- Agent Registry: Start sharing these agent modules across different teams.

- KPI Check: Compare your current MTTR (Mean Time to Repair) to your pre-agent days. The numbers should speak for themselves. 📈

Final Thoughts: Train the Agent, Don't Fear It

AI Agents aren't here to replace SREs; they are here to replace the boring parts of being an SRE. They turn us into "Agent Orchestrators."

Treat your agents like a smart intern: give them clear instructions, set strict boundaries, and give them the context they need to succeed. When done right, they’ll handle the midnight fires so you can stay in bed.

Let’s build the future of reliable ops together with DevOpsInside.com. 🚀

Happy automating, and may your alerts be few and your agents be trustworthy! 😄